PLATFORM

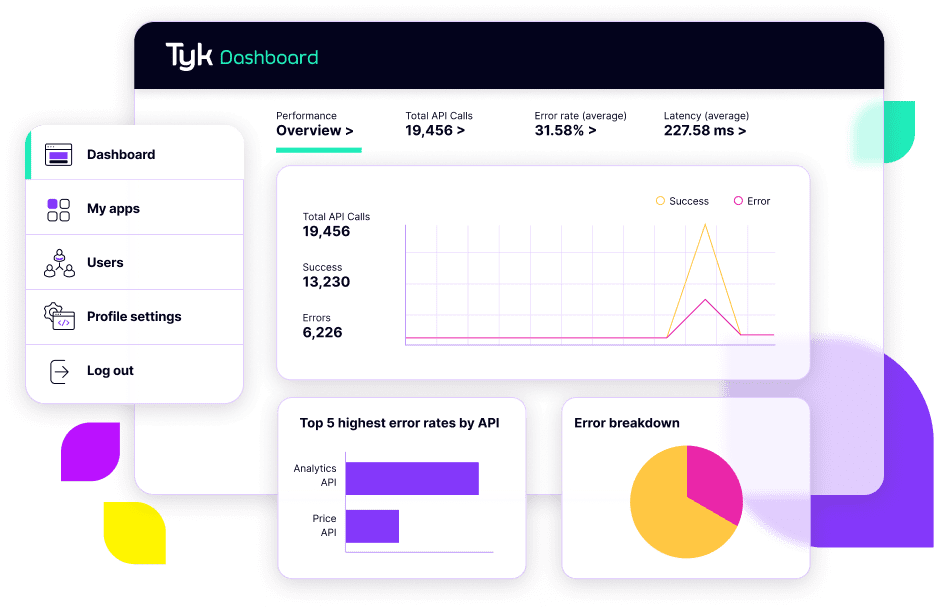

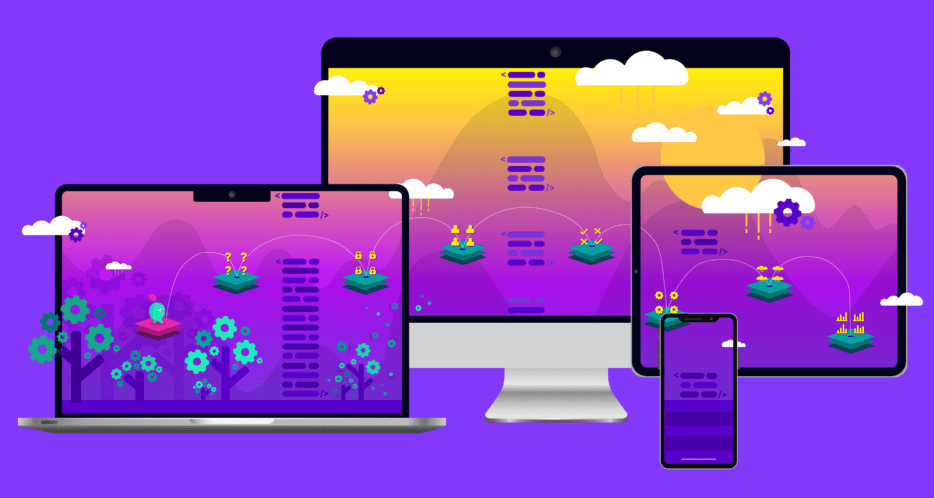

Throw it all at Tyk

Say goodbye to complexity and hello to API management that’s proven billions of times a day. Tyk is not just another platform; it redefines the way you work with APIs and unlocks their true potential. Its resilience and adaptability mean developers can trust the platform and throw everything at their projects with confidence. This is development, unleashed.

Grow without limits

Whether you manage a few APIs or navigate massive traffic, Tyk scales with you. We handle billions of critical API calls daily, from international payments to satellite navigation. With Tyk, you unlock new efficiencies, new business models, and limitless growth potential.

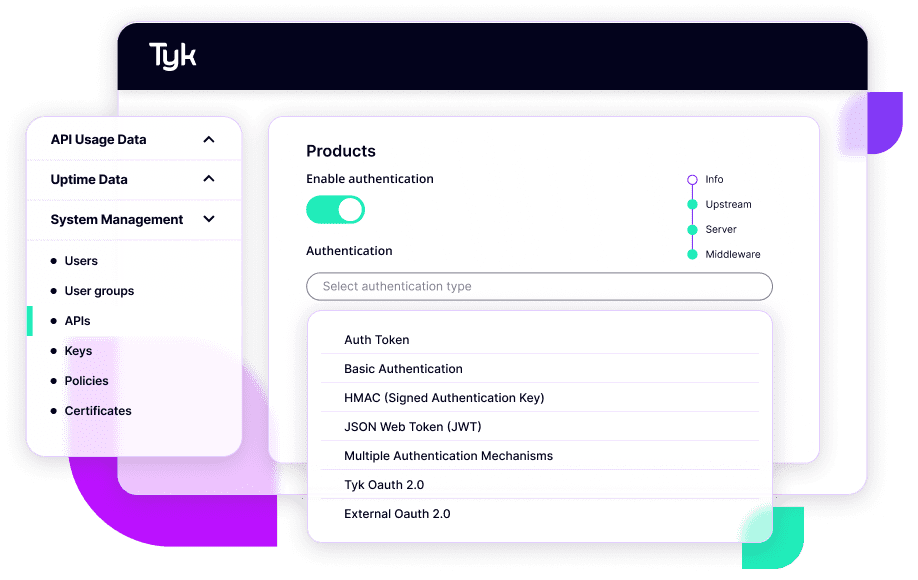

Security at the core

Your data is our top priority. Tyk’s API Management solution provides a secure framework, including encryption, access control, and authentication. Rest easy knowing your data is safe with Tyk.

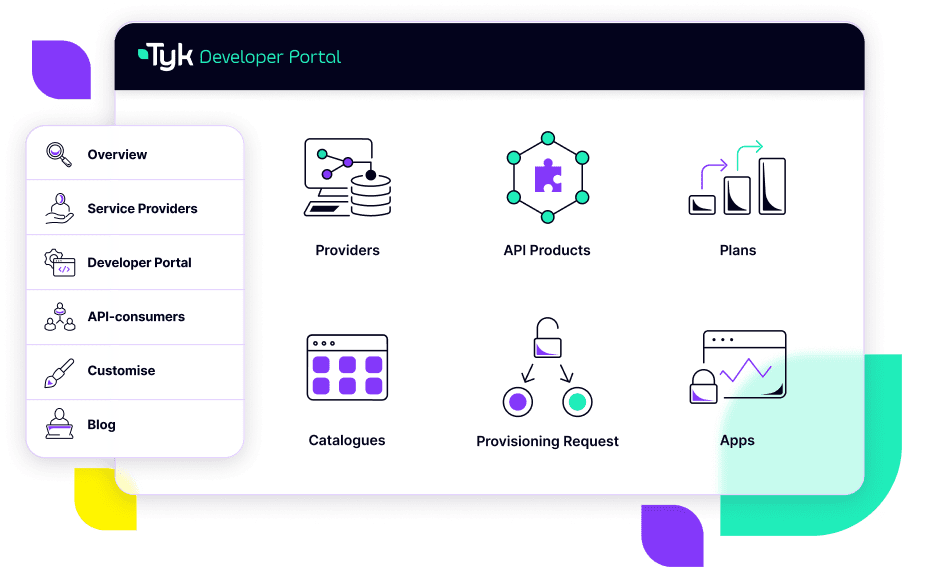

Make Tyk your own

Tyk’s API Management platform is designed to fit your business, not the other way around. Integrate with existing systems or customise workflows to meet your specific needs. With Tyk, it’s all about you.

BUILT FOR DEVELOPERS

Become the hero your devs need

Are you frustrated by the limitations of your current API management system? Tyk gives you complete control over your APIs, whether you choose to deploy in the cloud, on Kubernetes, or on-premises. Say goodbye to complex API management and hello to the freedom to create, innovate, and scale your APIs at will. With Tyk, you’re in charge.