AI seems to be everywhere in the tech space at the moment. You can’t avoid it: it’s at the peak of the hype cycle, on the cusp of sliding into the trough of disillusionment. But will it? At the pace AI vendors are innovating and improving, the hype train might outrun the hype cycle and actually live up to its own expectations. Who really knows?

AI is everywhere, and it is hyped

What we do know is that AI, specifically generative AI (that’s the one that can ace the bar exam, generate works of art in the style of any artist, and mimic our voices and faces with ease) is being eyed by every industry as a path to increased productivity.

This is a good thing. I’m pretty sure everyone would like an easy, guilt-free helping hand to get them through their duller tasks: writing repetitive code, structuring a quick social media post or outlining a blog post. Generative AI is incredibly powerful when it comes to helping folks who are already good at their jobs become even more effective.

The challenges facing organisations that want to use AI

What is clear is that to embrace the productivity gains and potential cost savings from AI, organisations need to get under the hood of what it means to adopt and implement an AI strategy.

In general, an individual using ChatGPT, DALL-E, or Stable Diffusion is not too concerned about the information they are sending into the jaws of the model. More importantly, neither are they concerned about the black hole of data consumption that is the vendor’s training and benchmarking data lake: the very data that is critical to consistently improving the quality of the underlying technology.

For an organisation to adopt AI, this poses a real issue. Not just from a confidentiality and trade secrets perspective, but from a boring old data governance, privacy, and accreditation perspective. Are you an ISO 9001 organisation? AI-as-a-Service is a problem. Do you process health or payment-card data (e.g. under HIPAA or PCI DSS)? AI-as-a-Service is a problem. Are you ISO 27001 certified? Nope, not for you! SOC 2? Ah-ah-ah, you didn’t say the magic words! Are you in the EU and allowing folks to sign up using their real name? Oof! GDPR might get you in trouble when it comes to AI.

What I’m trying to say is that adoption of AI into your organisation comes with a plethora of pitfalls when it comes to overall data governance.

Key challenges when adopting AI tools

A common counter-argument is that using AI as a Service is ultimately very similar to using services like Google Drive, Amazon S3 or Azure Cloud to store documents. It isn’t, really. You have a responsibility to your customers to safeguard their data, and a repository of data that is not processed or read by the vendor is not the same as a repository of data that may eventually be baked into the weights of a large language model.

The key challenges that face an organisation when wishing to adopt AI are:

- What data am I going to make available to the AI? This isn’t just initial training data, or contextual data (“embeddings” in the lingua franca of the biz), but even just the input question itself. Imagine sending a customer support ticket directly to an AI: that’s your customer’s name, email address, plus whatever is in the support email body itself that could be PII or otherwise sensitive.

- Who will be using these AIs in my organisation? What level of data access do they have?

- How will my staff be using these AIs? Are they using them for the good of the company or are they abusing (sometimes expensive) access for personal gain?

- How can AI hallucinations be controlled? AI algorithms are opaque and can produce unexpected outputs. How can my organisation best protect against these creating negative outcomes?

The answer: Robust Governance.

Introducing Montag, Tyk’s AI governance tool

Now, I am pretty stoked about AI, so much so that I wrote a memo to the wider company telling our folks to go off and embrace AI tools, to use their good judgement and only focus on things that are public facing (I then promptly got told off by both Legal and IT).

Regardless, the genie was out of the bottle, and we needed to get serious. So we built Montag.

Montag.ai is our internal AI governance tooling, used by our Platform Team. It enables us to:

- Centralise access credentials to AI vendors, be that commercial offerings or in-house ones that we run ourselves on our own hardware

- Log and measure how AI is being used internally

- Build and release Chatbots for our internal Slack channels

- Ingest data into our knowledge corpus, with siloed access settings

- Build AI functions (Smart Functions) as a Service for common, single-task automations (e.g. summarise this article), or modulated pass-through to upstream AIs with query inspection to provide context-aware access control

- Script complex multi-modal assistants that can selectively determine which AI vendor, model, and data set to use depending on the nature of the user input

- Apply full-blown RBAC, so that we can democratise access to LLMs without impinging on our security obligations

It does a whole bunch more than that, but ultimately it solved our key issues for us:

- What data am I going to make available to the AI? Our internal assistant (Wadsworth) will identify internal links and data submitted by the end user and direct these solely to self-hosted models, while other sensitive data (not PII) can be sent to vendor AIs that we have an Enterprise agreement with.

- Who will be using these AIs in my organisation? With fine-grained access controls, we can ensure that only specific users have direct access to data repositories (and can read from and write to them). For raw AI access (e.g. a developer wants to PoC on GPT-4), we can provide specific, monitored pass-through endpoints and internal API credentials to keep that access safe.

- How will my staff be using these AIs? Access to smart functions, AI endpoints, and bots can be strictly controlled through a combination of RBAC and internally generated API tokens.

- How can AI hallucinations be controlled? By running generated content through a pipeline of bots designed specifically to validate it. Our primary Q&A bots use another bot to check their answer and rewrite it.
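To make the routing and verification ideas above a little more concrete, here is a minimal sketch in Python. It is not how Montag is implemented: the `internal.example.com` domain, the role names, the `route` and `answer_with_check` helpers and the two regular expressions are all hypothetical, and the "models" are plain callables standing in for real LLM calls.

```python
import re
from typing import Callable

# Hypothetical detectors; a real deployment would need far more robust checks.
INTERNAL_LINK = re.compile(r"https?://[\w.-]*internal\.example\.com\S*")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

# Hypothetical RBAC table: which model targets each role may reach.
ROLE_TARGETS = {
    "developer": {"self-hosted", "vendor"},
    "support": {"self-hosted"},
}

Model = Callable[[str], str]  # stand-in for a call to an LLM


def route(prompt: str, role: str) -> str:
    """Return the model target for this prompt, honouring the role's access."""
    allowed = ROLE_TARGETS.get(role, set())
    if not allowed:
        raise PermissionError(f"role {role!r} has no AI access")
    sensitive = bool(INTERNAL_LINK.search(prompt) or EMAIL.search(prompt))
    # Internal links and personal data never leave self-hosted models.
    wanted = "self-hosted" if sensitive else "vendor"
    if wanted in allowed:
        return wanted
    if wanted == "vendor" and "self-hosted" in allowed:
        return "self-hosted"  # safe downgrade: keep the request in-house
    raise PermissionError(f"role {role!r} may not use {wanted} models")


def answer_with_check(question: str, primary: Model, checker: Model) -> str:
    """Have a second bot review and rewrite the primary bot's draft."""
    draft = primary(question)
    review = f"Question: {question}\nDraft answer: {draft}\nVerify and rewrite."
    return checker(review)
```

The design choice worth noting is that the router fails closed: an unknown role gets nothing, and a role without vendor access is quietly kept on self-hosted models rather than being let through.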

For API-level access, our platform team will use our internal Tyk APIM stack to provide tokens, rate limits and endpoint-specific access to the Montag Smart APIs. Which brings me to my next point…

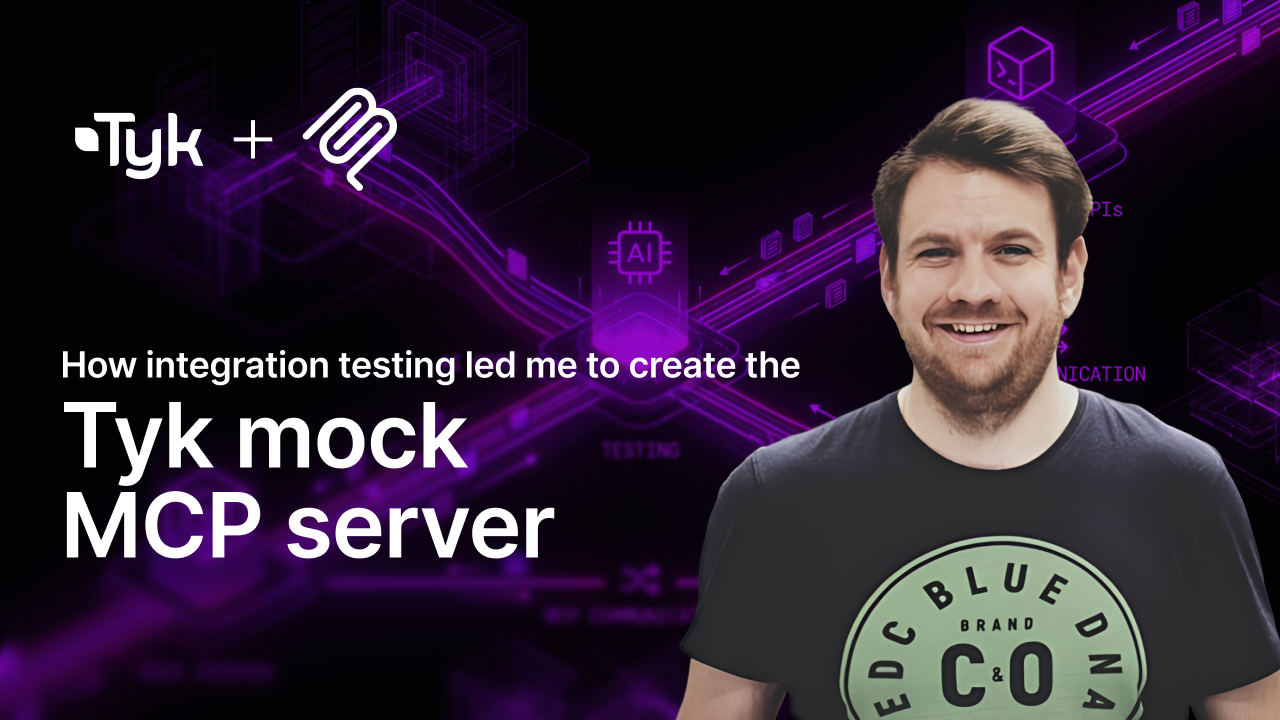

How we see the intersection of APIM and AI, in the most realistic case

AIs are APIs. While chat is seen as the universal interface, what makes AI so powerful is programmatic access, whether it’s a consumer using AIs to enable a new capability or the AIs themselves calling APIs to enhance their own responses (viz. ChatGPT function calling). This functionality is fundamentally powered by APIs.

Specifically, internal APIs: APIs that give access to your customer data, your sales data, your warehouse inventory, whatever it takes to make the AI as useful, as productive, and as accurate as possible.
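As a rough illustration of an AI calling an internal API, the sketch below dispatches a model-issued "tool call" to an allow-listed Python function. Everything here is an assumption made for the example: the JSON shape (`name` plus `arguments`) is a simplified stand-in rather than any vendor’s exact format, and `get_inventory` fakes a warehouse API with a hard-coded dictionary.

```python
import json


def get_inventory(sku: str) -> dict:
    """Hypothetical stand-in for an internal warehouse inventory API."""
    stock = {"SKU-1": 12}
    return {"sku": sku, "in_stock": stock.get(sku, 0)}


# Only functions listed here can ever be invoked on the model's behalf.
TOOLS = {"get_inventory": get_inventory}


def dispatch(tool_call_json: str) -> str:
    """Run an allow-listed tool call and return the result as JSON text."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # the model supplied wrong or missing arguments
        return json.dumps({"error": str(exc)})
    return json.dumps(result)
```

The string returned by `dispatch` would typically be handed back to the model as context for its final answer. The allow-list is the governance hook: the model can only reach the APIs you have chosen to expose.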

While many organisations in the API management space are focussing on shoe-horning AI into their products, we very much see AI as an enabler for business value, and APIM as an enabler for that. A significant factor in the recent rapid adoption of AI tools has been the method through which they are packaged and consumed, with APIs playing a central role.

API management enables AI-as-a-Service

The intersection of APIM and AI is that platform teams should be using APIM to provide AI-as-a-Service to their internal customers, and should be using APIM to mediate and monitor how their products – enhanced with AI – provide value to their customers.
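To give a feel for what "mediate and monitor" can mean in practice, here is a toy, in-process sketch of the three things a gateway does in front of an AI endpoint: check a token, enforce a rate limit, and log usage. It is not Tyk’s API or configuration. The `AIGateway` class, its parameters and the status-code tuples are hypothetical, and a real APIM stack would do this at the network edge rather than in application code.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class AIGateway:
    """Toy gateway: token check, sliding-window rate limit, usage log."""

    def __init__(self, tokens: dict, limit: int, window_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.tokens = tokens            # token -> team name
        self.limit = limit              # max calls per token per window
        self.window = window_seconds
        self.clock = clock              # injectable so it can be tested
        self.calls = defaultdict(deque) # token -> timestamps of recent calls
        self.usage_log = []             # (team, prompt length) per served call

    def handle(self, token: str, prompt: str, upstream: Callable[[str], str]):
        team = self.tokens.get(token)
        if team is None:
            return 401, "invalid token"
        now = self.clock()
        recent = self.calls[token]
        # Forget calls that have fallen out of the rate-limit window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.limit:
            return 429, "rate limit exceeded"
        recent.append(now)
        self.usage_log.append((team, len(prompt)))
        return 200, upstream(prompt)
```

Here `upstream` is any callable that forwards the prompt to a model; the usage log is what lets a platform team answer "who is using AI, and how much?".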

So, rather than announcing some shoe-horned AI feature for our APIM stack, we’re going to release our Montag.ai stack as a product to empower platform teams and product teams to adopt AI quickly and easily and, most importantly, to ensure robust AI governance for their organisations.