MCP is entering its enterprise adoption phase

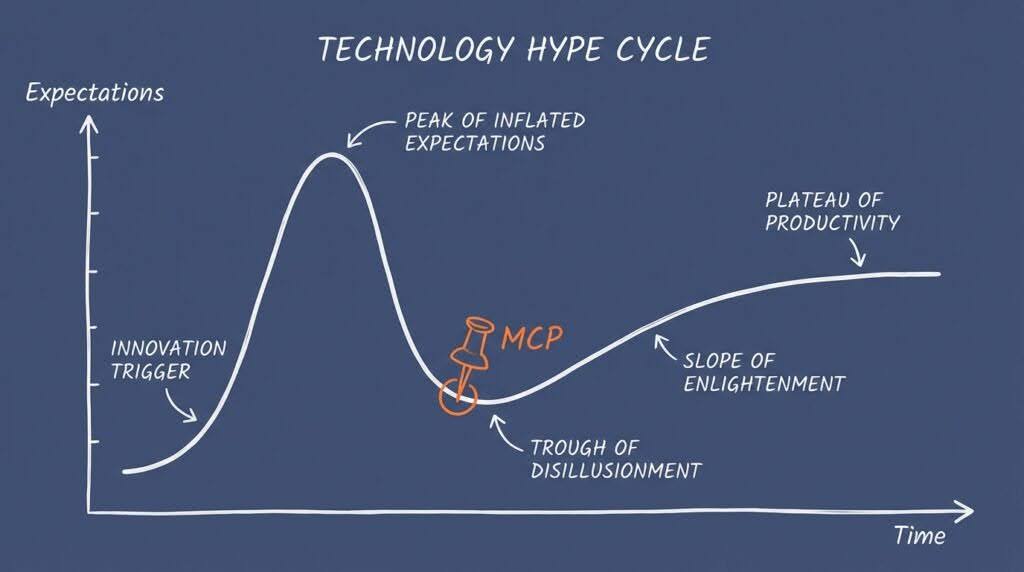

MCP isn’t dead. It’s moving through a textbook Gartner Hype Cycle, from the peak of inflated expectations into the trough of disillusionment. This is where serious enterprise adoption begins.

The “MCP is dead” narrative comes primarily from solo developers and small teams who never needed MCP’s core value proposition: auth, audit trails, and governance at scale. The April 2026 news that MCP has a design weakness that “enables Arbitrary Command Execution (RCE) on any system running a vulnerable MCP implementation” also hasn’t helped.

Yet enterprise signals are strong. Google launched fully managed remote MCP servers on Google Cloud. Tyk shipped an enterprise AI management platform with an AI gateway at its core. Others have followed suit.

We can see the direction of travel in the 2026 MCP roadmap, which makes enterprise readiness a top-four priority, including OAuth 2.1 authentication.

Is MCP actually dead in 2026?

No. The Model Context Protocol is not dead; it’s exiting its hype phase and entering the phase where enterprises actually ship with it.

Perplexity CTO Denis Yarats announced at Ask 2026 on March 11, 2026, that Perplexity was moving away from MCP, citing MCP tool definitions consuming 72% of the context window. Within days, “MCP is dead” became a trending take across developer forums. Analysis from Scalekit backed the efficiency argument, finding that MCP costs 4-32x more tokens than CLI equivalents for individual use cases.

But efficiency for a single developer is not the same problem as governance for 50 agents running across a department. The criticism is valid on its own terms but completely misses the point of why standardization matters in the AI supply chain.

Glama published a detailed analysis titled “We’re Entering the Real Adoption Phase of the MCP Hype Cycle,” arguing that the backlash signals maturity, not failure. Meanwhile, CData declared 2026 to be the critical year for enterprise-ready MCP adoption. These aren’t outlier positions – they reflect what platform teams building AI agent infrastructure are actually experiencing.

Why are developers saying MCP is dead?

The backlash against MCP is coming almost exclusively from individual developers optimizing for personal productivity. Their complaints are legitimate but narrowly scoped. The core criticisms are:

- Token overhead: MCP tool definitions consume significant context window space. For a solo developer with a coding agent, a direct CLI call is cheaper and faster.

- Complexity for simple use cases: If you have one agent calling one API, MCP adds a protocol layer you don’t need.

- Early-stage friction: MCP tooling in 2025 was rough. Servers crashed, documentation was thin, and the developer experience lagged behind direct integrations. Generally speaking, MCP was a nightmare in its early form.
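To see where the token overhead comes from, here is a minimal sketch of a single tool definition in the shape an MCP server advertises in a `tools/list` response (the `name`, `description`, and `inputSchema` fields follow the MCP specification; the `create_ticket` tool is hypothetical). Every advertised definition is serialized into the model’s context, so the cost grows with the number of tools. The four-characters-per-token ratio is a rough rule of thumb assumed here, not a real tokenizer.

```python
import json

# Hypothetical tool definition, shaped like an entry in an MCP tools/list response.
CREATE_TICKET = {
    "name": "create_ticket",
    "description": "Create a ticket in the issue tracker with a title, body, and priority.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string", "description": "Short summary of the issue"},
            "body": {"type": "string", "description": "Full description in Markdown"},
            "priority": {"type": "string", "enum": ["low", "medium", "high"]},
        },
        "required": ["title", "body"],
    },
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; assumes ~4 characters per token (a heuristic, not a tokenizer)."""
    return max(1, round(len(text) / chars_per_token))


def context_cost(tools: list[dict]) -> int:
    """Estimated tokens consumed just by serializing the tool definitions into context."""
    return sum(estimate_tokens(json.dumps(tool)) for tool in tools)


# An agent wired to 40 similar tools pays this cost on every request,
# before it has done any actual work.
print(context_cost([CREATE_TICKET] * 40))
```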

A Hacker News thread (295 points, 205 comments) on this topic revealed a clean split. User CharlieDigital captured it: “Do you work in a team context of 10+ engineers? Do you all use different agent harnesses? Do you need telemetry?” If not, MCP probably isn’t for you, and that’s fine.

User kasey_junk made the broader point: “The CLI focus is an artifact of coding agents being the tip of the iceberg for LLM agent use cases.” Coding agents are the most visible AI agent category in 2026, but they represent a fraction of where agent deployments are heading: customer service, internal ops, data pipelines, and compliance workflows. Those use cases have different requirements.

What is the MCP hype cycle?

MCP is following the Gartner hype cycle pattern with textbook precision:

| Stage | Timeframe | What happened |
|---|---|---|
| Innovation trigger | Late 2024 | Anthropic launches MCP as an open protocol for connecting AI agents to tools and data sources. |
| Peak of inflated expectations | Mid-2025 | MCP branded “USB-C for AI.” Mass adoption across dev tools. Every AI startup adds MCP support. |
| Trough of disillusionment | Early 2026 | Perplexity drops MCP. Token cost analyses published. “MCP is dead” hot takes proliferate. |
| Slope of enlightenment | 2026-2027 | Enterprise hardening: OAuth 2.1 auth, gateways, audit trails, governance. |
| Plateau of productivity | 2027+ | MCP becomes standardized enterprise AI agent infrastructure, invisible plumbing. |

The trough is where weak technology dies and strong technology gets hardened. HTTP, REST, and OAuth all went through this. MCP is clearly in the trough; the real question is whether the enterprise demand signal is strong enough to pull it through.

Based on the data, and on what we see in production deployments across Tyk customers, yes, it is.

Why do enterprises still need MCP?

Enterprises need MCP because the alternative (agents calling tools directly via CLI or raw API calls) creates security, governance, and operational problems that are invisible at small scale and catastrophic at large scale.

The key point is this: when an agent runs CLI commands, it runs as you, with your permissions and no audit trail. It can leak secrets via curl to any endpoint. Run 50 agents across a department, each needing keys for Slack, Jira, and GitHub, and you’ve effectively recreated the pre-SSO world at machine speed.

MCP vs direct CLI/API calls for enterprise use cases

| Capability | MCP | Direct CLI/API |
|---|---|---|
| Authentication | OAuth 2.1 spec, SSO-integrated, per-agent scoping | Agent runs with user’s full credentials |
| Audit trails | Centralized logging of every tool invocation | No standardized logging; varies by tool |
| Permission scoping | Granular per-tool, per-agent permissions | Agent gets whatever the user has |
| Multi-agent governance | Centralized gateway controls which agents access what | Each agent configured individually |
| Configuration portability | Standardized server configs across teams | Custom scripts per team, per tool |
| Secret management | Secrets stay server-side, never in agent context | Secrets passed to agent, risk of leakage |
| Token efficiency | Higher overhead per call | Lower overhead per call |
| Setup complexity | Higher initial setup | Lower initial setup |
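The “secrets stay server-side” row is easiest to see in code. The following is a minimal sketch, assuming a hypothetical gateway that keeps upstream credentials in its own store: the agent identifies itself, and the gateway checks the agent’s grant and attaches the real upstream key to the outbound request, so the key never enters the agent’s context. `SERVER_SIDE_SECRETS`, `AGENT_GRANTS`, and `forward` are illustrative names, not a Tyk or MCP API.

```python
from dataclasses import dataclass, field

# Hypothetical server-side secret store; in production this would be a vault, not a dict.
SERVER_SIDE_SECRETS = {
    "slack": "xoxb-server-side-only",
    "jira": "jira-token-server-side-only",
}

# Which upstream services each agent may reach (hypothetical grants).
AGENT_GRANTS = {
    "support-agent": {"slack", "jira"},
    "report-agent": {"jira"},
}


@dataclass
class OutboundRequest:
    service: str
    payload: dict
    headers: dict = field(default_factory=dict)


def forward(agent_id: str, service: str, payload: dict) -> OutboundRequest:
    """Check the agent's grant, then attach the upstream credential on the gateway side."""
    if service not in AGENT_GRANTS.get(agent_id, set()):
        raise PermissionError(f"{agent_id} is not allowed to call {service}")
    secret = SERVER_SIDE_SECRETS[service]
    return OutboundRequest(service, payload, {"Authorization": f"Bearer {secret}"})


req = forward("report-agent", "jira", {"summary": "Weekly report"})
print(sorted(req.headers))  # the key exists only in the gateway's outbound request
```

In the direct model, 50 agents each holding Slack, Jira, and GitHub keys means 150 copies of credentials scattered across agent contexts; here each upstream secret is held once, by the gateway.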

The last two rows are where MCP critics are right: MCP does cost more tokens and takes more setup. For a single developer, that tradeoff is bad. For an enterprise with 200 agents across 15 teams, the first six rows matter far more. MCP is a natural fit for enterprise API auditing and for enforcing domain boundaries, and centralized MCP servers solve problems that simply don’t matter to individual developers, such as brand guardianship, tone of voice, and domain context.

This matches what we’ve observed firsthand with teams adopting AI agents at scale. WorkOS analysis confirms the pattern: enterprises deploying MCP consistently run into four requirements, namely audit trails, SSO-integrated auth, gateway behavior, and configuration portability. These aren’t MCP problems; they’re enterprise infrastructure problems, and MCP is becoming the protocol layer that addresses them.

Noma Security flagged the near-complete lack of standardized audit trails as a top blind spot in current AI agent deployments. MCP, combined with gateway infrastructure and API management platforms such as Tyk, provides the control plane to close that gap.
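A centralized audit trail can be as simple as one structured record per tool invocation, written at the gateway rather than inside each tool. Below is a minimal sketch, assuming a hypothetical `audited` wrapper and JSON-lines records; the field names are illustrative and not part of the MCP specification. Arguments are hashed rather than stored verbatim, so the log does not hold raw argument values.

```python
import hashlib
import json
import time
from typing import Any, Callable

AUDIT_LOG: list[str] = []  # stand-in for a central log sink (assumption)


def audited(agent_id: str, tool_name: str, tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so every invocation, successful or not, emits one JSON-lines audit record."""

    def wrapper(**kwargs: Any) -> Any:
        args_digest = hashlib.sha256(json.dumps(kwargs, sort_keys=True).encode()).hexdigest()
        record = {"ts": time.time(), "agent": agent_id, "tool": tool_name,
                  "args_sha256": args_digest, "status": "ok"}
        try:
            return tool(**kwargs)
        except Exception:
            record["status"] = "error"
            raise
        finally:
            # Runs on both success and failure, so no invocation goes unlogged.
            AUDIT_LOG.append(json.dumps(record))

    return wrapper


get_invoice = audited("billing-agent", "get_invoice",
                      lambda invoice_id: {"id": invoice_id, "total": 120})
get_invoice(invoice_id="INV-42")
print(AUDIT_LOG[-1])
```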

What does the 2026 MCP roadmap address?

The 2026 MCP roadmap, outlined by David Soria Parra at Anthropic, makes enterprise readiness a top-four priority. Key items include:

Authentication and authorization: MCP now includes an OAuth 2.1 authentication specification. This moves MCP from “trust the agent” to “verify every request,” a prerequisite for any enterprise deployment. Agents authenticate to MCP servers the same way applications authenticate to APIs, using established standards rather than custom token-passing. Tyk’s governance workflows support these patterns natively.
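One concrete consequence: OAuth 2.1 makes PKCE (RFC 7636) mandatory for the authorization code flow, and the MCP authorization specification requires MCP clients to implement it. Here is a minimal sketch of the client-side step, generating a code verifier and its S256 code challenge with the Python standard library; the redirect and token exchange around it are omitted, and the function names are illustrative.

```python
import base64
import hashlib
import secrets


def make_code_verifier(num_bytes: int = 32) -> str:
    """High-entropy verifier: 32 random bytes encode to 43 base64url chars (RFC 7636 allows 43-128)."""
    return base64.urlsafe_b64encode(secrets.token_bytes(num_bytes)).rstrip(b"=").decode("ascii")


def s256_challenge(verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))), without padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


verifier = make_code_verifier()
challenge = s256_challenge(verifier)
# The client sends `challenge` with code_challenge_method=S256 in the authorization request,
# then proves possession by sending `verifier` in the token request.
print(len(verifier), len(challenge))
```

Running `s256_challenge` on the sample verifier in RFC 7636 Appendix B reproduces the challenge published there, which is a quick way to check an implementation.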

Streamable HTTP transport: Moving beyond the local stdio transport to Streamable HTTP (which replaced the earlier HTTP+SSE transport) makes MCP deployable in cloud environments, behind load balancers, and through API gateways. This is the architectural shift that enables remote, managed MCP servers.
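Under Streamable HTTP, a client sends each JSON-RPC 2.0 message as an HTTP POST to a single MCP endpoint, which is what lets ordinary gateways and load balancers sit in the path. A minimal sketch that builds, but does not send, a `tools/call` request with `urllib`; the endpoint URL, token, and `create_ticket` tool are hypothetical, and a real client must first complete the `initialize` handshake and then echo any `Mcp-Session-Id` header the server issues.

```python
import json
import urllib.request

MCP_ENDPOINT = "https://mcp.example.internal/mcp"  # hypothetical endpoint


def build_tool_call(request_id: int, tool: str, arguments: dict,
                    access_token: str) -> urllib.request.Request:
    """Build a JSON-RPC 2.0 tools/call message as an HTTP POST (not sent here)."""
    body = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return urllib.request.Request(
        MCP_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The server may reply with plain JSON or an SSE stream, so the client accepts both.
            "Accept": "application/json, text/event-stream",
            # A standard bearer token that a gateway can validate before the MCP server sees it.
            "Authorization": f"Bearer {access_token}",
        },
    )


req = build_tool_call(1, "create_ticket", {"title": "Login fails"}, "example-token")
print(req.get_method(), req.full_url)
```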

Tool governance and observability: Vertex AI Agent Builder added enhanced tool governance capabilities. Autodesk contributed directly to shaping MCP security specifications for enterprise environments. These are not theoretical roadmap items but shipping features driven by production requirements.

Ecosystem maturity: Google launched fully-managed remote MCP servers on Google Cloud. Tyk launched Tyk AI Studio. These are infrastructure investments that signal long-term commitment, not experimentation.

Hacker News user phpnode noted the accessibility angle: “MCP lets less-technical people plug tools into agents with a 10-second auth flow.” As MCP matures, its audience expands beyond developers to operations teams, analysts, and business users who need agent capabilities without writing integration code.

How does Google’s A2A protocol relate to MCP?

Google’s Agent-to-Agent (A2A) protocol is complementary to MCP, not competitive. They address different layers of the AI agent stack, with A2A handling agent-to-agent communication and MCP handling agent-to-tool connectivity.

| Protocol | Purpose | Scope |
|---|---|---|
| MCP | Agent-to-tool connectivity | How an agent accesses tools, data sources, and APIs |
| A2A | Agent-to-agent communication | How multiple agents coordinate, delegate, and share results |

An enterprise deployment might use MCP to connect agents to internal tools (databases, CRMs, ticketing systems) and A2A to coordinate workflows between specialized agents (e.g., a research agent hands off to a summarization agent, which hands off to a publishing agent).

The MCP vs A2A framing is a false dichotomy. Production AI agent architectures will use both, the same way web applications use both HTTP and WebSockets as different protocols for different communication patterns.
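The research, summarization, and publishing handoff described above can be sketched to show where each protocol sits. This is a conceptual, in-process simulation only: plain function calls stand in for both the MCP tool layer and the A2A delegation layer, and the agent and tool names are hypothetical. It does not use either protocol’s SDK.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Agent:
    name: str
    tools: dict[str, Callable[[str], str]]  # agent-to-tool layer (MCP's job)
    next_agent: Optional["Agent"] = None     # agent-to-agent layer (A2A's job)

    def run(self, task: str, trace: list[str]) -> str:
        result = task
        for tool_name, tool in self.tools.items():
            trace.append(f"[MCP] {self.name} -> {tool_name}")
            result = tool(result)
        if self.next_agent is None:
            return result
        trace.append(f"[A2A] {self.name} -> {self.next_agent.name}")
        return self.next_agent.run(result, trace)


publisher = Agent("publishing", {"cms.publish": lambda text: f"published: {text}"})
summarizer = Agent("summarization", {"llm.summarize": lambda text: f"summary({text})"}, publisher)
researcher = Agent("research", {"search.query": lambda q: f"findings on {q}"}, summarizer)

trace: list[str] = []
print(researcher.run("MCP adoption", trace))
print("\n".join(trace))
```

The trace alternates between the two layers: each agent reaches its own tools over the tool protocol, then hands its result to the next agent over the agent protocol.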

How MCP and API gateways create enterprise-grade AI systems

Enterprise-grade AI systems are increasingly built by combining MCP with API gateways to create secure, modular, and scalable architectures.

- MCP standardizes how AI models access external tools, data sources, and services, acting as a “plug-and-play” interface between models and enterprise systems.

- API gateways sit in front of these interactions, enforcing authentication, rate limiting, observability, and policy control.

Together, MCP and API gateways decouple the AI layer from backend complexity while ensuring governance, reliability, and compliance. This is critical for production environments where models must safely interact with sensitive business data and services.

Solutions such as Tyk Gateway illustrate this pattern well. The gateway manages and secures all API traffic (including MCP endpoints), while providing analytics, access control, and versioning. This allows organizations to expose AI capabilities as managed services, integrate them with existing microservices, and scale usage without compromising security or performance.
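The gateway pattern can be sketched as a chain of checks that runs before any message reaches an MCP server. The following is a minimal, in-process sketch, assuming a hypothetical static token table and a per-agent token-bucket rate limiter; it is not Tyk’s configuration or API, just the shape of the policy a gateway enforces.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class TokenBucket:
    """Token bucket: refills `rate` tokens per second, up to `capacity`."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    """Hypothetical gateway: authenticate, rate-limit per agent, then forward to the MCP handler."""

    def __init__(self, valid_tokens: dict[str, str], upstream: Callable[[dict], Any],
                 rate: float = 1.0, burst: float = 3) -> None:
        self.valid_tokens = valid_tokens  # bearer token -> agent id (hypothetical)
        self.upstream = upstream          # the MCP server handler behind the gateway
        self.rate, self.burst = rate, burst
        self.buckets: dict[str, TokenBucket] = {}

    def handle(self, token: str, message: dict) -> tuple[int, Any]:
        agent = self.valid_tokens.get(token)
        if agent is None:
            return 401, "unauthenticated"
        bucket = self.buckets.get(agent)
        if bucket is None:
            bucket = self.buckets[agent] = TokenBucket(self.rate, self.burst)
        if not bucket.allow():
            return 429, "rate limit exceeded"
        return 200, self.upstream(message)


gw = Gateway({"tok-123": "support-agent"}, upstream=lambda msg: {"echo": msg["method"]})
print([gw.handle("tok-123", {"method": "tools/list"})[0] for _ in range(4)])
print(gw.handle("bad-token", {"method": "tools/list"})[0])
```

Keeping the checks in the gateway means individual MCP servers stay unchanged when policy changes, which is the decoupling the section describes.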

What should API and platform teams do now?

If you manage API infrastructure or platform engineering for a team deploying AI agents, here’s a concrete action plan:

- Audit your current agent-to-tool connections. Map every tool, API, and data source your agents access. Identify which connections have audit trails and which don’t. Noma Security’s finding of a near-complete lack of standardized audit trails likely applies to your environment.

- Evaluate MCP gateway options to serve as the control plane layer between agents and tools. A gateway gives you auth, rate limiting, logging, and policy enforcement without modifying individual tool integrations.

- Implement OAuth 2.1 for agent authentication. The MCP OAuth 2.1 spec is available now. Move away from static API keys passed to agents. Every agent should authenticate with scoped, rotatable credentials – the same standards you already enforce for human users and service accounts. Tyk’s API governance workflows support these patterns natively.

- Separate agent permissions from user permissions. An agent acting on behalf of a user shouldn’t inherit that user’s full access. MCP servers enable granular permission scoping per tool, per agent. This is the same principle as least-privilege access, applied to AI agents.

- Plan for multi-protocol agent architectures, with MCP for agent-to-tool and A2A for agent-to-agent. Your API gateway layer should be able to manage traffic for both patterns as your agent fleet grows.
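Separating agent permissions from user permissions comes down to one rule: an agent acting for a user gets the intersection of what the user may do and what the agent was explicitly granted, never the user’s full access. A minimal sketch with hypothetical scope strings; in a real deployment these scopes would be carried in OAuth tokens issued by an authorization server.

```python
def effective_scopes(user_scopes: set[str], agent_grant: set[str]) -> set[str]:
    """Least privilege: the agent never exceeds either the user's access or its own grant."""
    return user_scopes & agent_grant


def authorize(tool_scope: str, user_scopes: set[str], agent_grant: set[str]) -> bool:
    """Allow a tool call only if its required scope survives the intersection."""
    return tool_scope in effective_scopes(user_scopes, agent_grant)


# A hypothetical admin user delegating to a triage agent.
admin = {"jira:read", "jira:write", "github:admin", "slack:post"}
triage_agent = {"jira:read", "jira:write"}

print(sorted(effective_scopes(admin, triage_agent)))  # ['jira:read', 'jira:write']
print(authorize("github:admin", admin, triage_agent))  # False
```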

API governance in the age of AI means designing for both human and machine consumers, and 91% of brand mentions in AI responses trace to third-party sources. The content your MCP servers expose to agents shapes how AI systems represent your organization. Treat MCP server configuration as a content governance problem, not just an infrastructure one. And remember that MCP itself isn’t a strategy; it’s a tool.

FAQ

Is MCP being replaced by Google’s A2A protocol?

No. MCP and A2A solve different problems. MCP connects agents to tools and data sources. A2A enables agents to communicate with each other. Google has explicitly positioned them as complementary. Most enterprise AI architectures will use both protocols.

Is MCP too expensive in token usage for production use?

For individual developers with single agents, MCP does consume more tokens than direct CLI calls (Scalekit measured 4-32x higher token costs). For enterprise deployments where auth, audit, and governance are requirements, the token overhead is a reasonable cost for centralized control. The 2026 roadmap includes optimizations to reduce context window consumption.

Which companies are investing in MCP for enterprise use?

Google launched fully-managed remote MCP servers on Google Cloud. Tyk shipped Tyk AI Studio. Autodesk contributed to MCP security specifications. Vertex AI Agent Builder added enhanced tool governance. These are production commitments, not experiments.

Should my team adopt MCP now or wait?

If you have fewer than five agents and a small team, direct integrations may serve you well today. If you’re scaling past ten agents, have compliance requirements, or need centralized auth and audit trails, start evaluating MCP gateway infrastructure now. The protocol is mature enough for production use, and the enterprise tooling ecosystem is shipping rapidly.

Take control of your AI agent governance

Ready to govern your AI agent infrastructure? Tyk’s API gateway and governance platform provide the control plane for MCP traffic, with built-in OAuth, rate limiting, and audit logging. Learn how Tyk is shaping MCP in practice and discover Tyk AI Studio.