The Model Context Protocol offers a standard connection between AI applications and external tools, data sources, and services. But that’s only half the solution. For any real-world enterprise application, you also need a control plane for security, traffic management, and observability to govern what flows through that connection.

What is an MCP gateway?

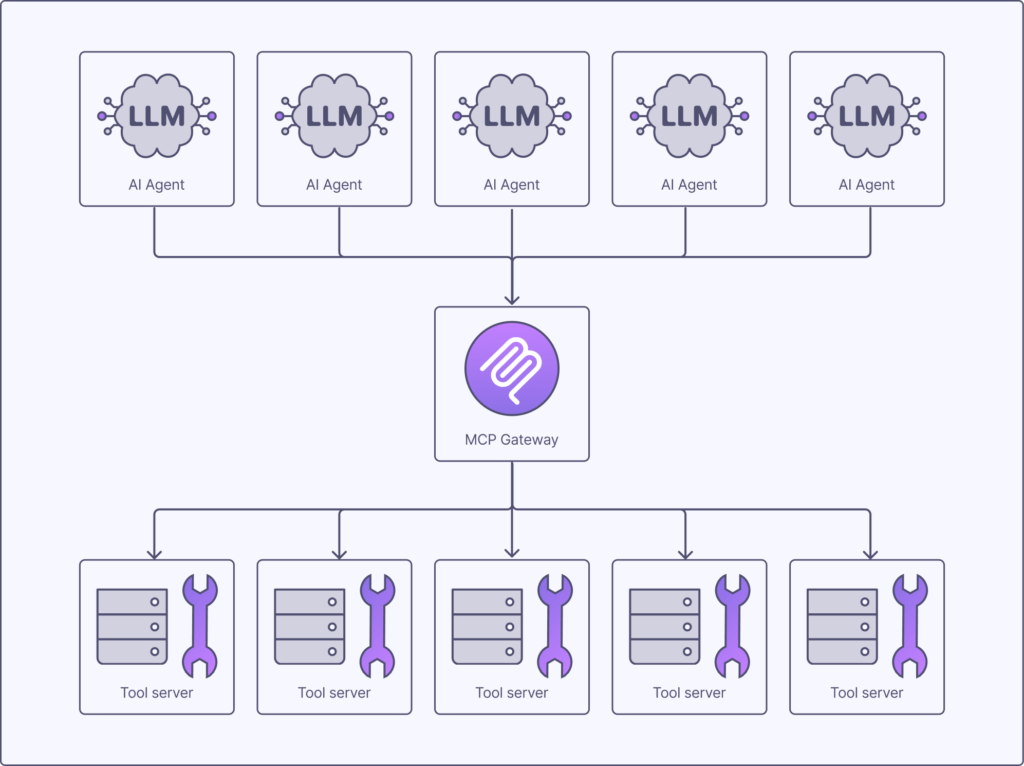

A Model Context Protocol gateway is an infrastructure component that serves as a centralized, secure middleware layer between AI applications (clients) and Model Context Protocol (MCP) servers.

- MCP defines how a single AI agent talks to a single data source.

- An MCP gateway acts as a single pane of glass that manages secure access to dozens or hundreds of MCP servers behind a single endpoint.

The MCP gateway functions as a reverse proxy, traffic controller, and security layer for agentic AI. This is essential for enterprise production environments where managing many individual connections is unsustainable.

Understanding MCP’s architecture: Hosts, clients, and servers

The MCP defines a simple but effective architecture based on three core components that manage the exchange of information:

- Host: The application that needs to access external context or functionality, such as an IDE or AI assistant like Claude Desktop. The host contains the AI model and initiates requests for tools or data on its behalf.

- Server: The application or service that provides the tools or data. Examples include a weather API, a database connector, or an internal service for booking meetings. The server exposes its capabilities over the MCP protocol.

- Client: The library or software component that facilitates communication between the host and the server. It handles the low-level details of the MCP protocol, allowing developers to focus on the application logic rather than the transport layer.

Why MCP alone isn’t enough for the enterprise

The Model Context Protocol provides a much-needed standard for communication. However, it’s insufficient for enterprise production environments because it’s a protocol, not a management or security framework. Relying on direct MCP connections between models and tools at scale introduces significant challenges in scalability, governance, and security that the protocol wasn’t designed to solve.

| Aspect | Model Context Protocol (MCP) | MCP gateway |

| Purpose | A communication standard; the “how” of a request-response cycle. | A management layer; the “who, what, where, and when” of tool access. |

| Focus | Interoperability between an AI host and a tool server. | Centralized security, governance, routing, and observability for all tool traffic. |

| Security | Defines an optional OAuth 2.1 framework for HTTP transports only. Auth is not mandated, the authorization server is explicitly out of scope, and STDIO transport has no auth spec at all. | Centrally enforces auth across all agents and tools regardless of how each server is configured, with consistent policy, credential management, and runtime controls. |

| Management | No central management. Connections are point-to-point. | Offers a single point of control for managing all agents and tools. |

| Observability | No unified logging or metrics. | Provides a complete audit trail, metrics, and tracing for all requests. |

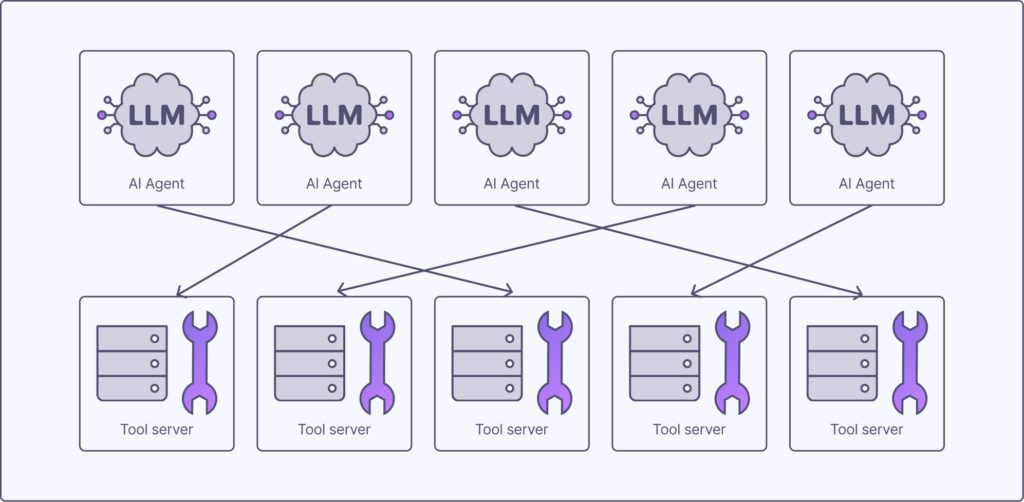

The scaling challenge: Managing dozens of agents and tools

In a simple scenario with one AI agent and one or two tools, direct connections are manageable. But as an organization deploys dozens (or hundreds, or thousands…) of agents that need to connect to hundreds of internal and external tool servers, this architecture quickly devolves into point-to-point chaos.

Each agent needs to be configured with the specific addresses and credentials for every tool it might use, creating a massive operational burden. There is no central visibility into which agents are using which tools. A simple change, like updating a tool’s endpoint or deprecating a version, requires hunting down and updating every single agent that consumes it. This tight coupling makes the system fragile and difficult to maintain, slowing down development and increasing the risk of outages.

The governance gap: A lack of centralized enforcement, auditing, and policy control

The most critical limitation of MCP in the enterprise is the governance gap. MCP standardizes communication and defines an optional OAuth 2.1 authorization framework, but it is not a management or enforcement solution. Each server independently chooses whether to implement auth, the authorization server is explicitly outside the spec’s scope, and there is no central place to manage policies, audit usage, or enforce controls across your entire fleet of agents and tools. This leaves a dangerous security vacuum when connecting powerful AI agents to sensitive corporate data and systems.

Without a control plane, you face several key risks:

- Unauthenticated access: Even where MCP defines an auth framework, implementation is optional and per-server. There is no central way to verify agent identity or guarantee that every tool in your fleet requires authentication before granting access.

- Authorization failures: There is no central place to enforce rules about which agent can use which tool. A sales-focused agent might inadvertently gain access to sensitive financial data from a tool meant for the finance team.

- No audit trail: In a direct connection model, there is no unified log of which agent invoked a tool, with what inputs, and what the outcome was. This makes compliance reporting, debugging, and security forensics nearly impossible.

- Lack of cost and usage controls: You cannot enforce rate limits or usage quotas. A malfunctioning or runaway agent could overwhelm a backend service with requests, causing an outage or incurring massive costs from a third-party API.

- Prompt injection vulnerabilities: A malicious actor could compromise a tool to return a cleverly crafted response. When fed back into the model’s context, this could trick the agent into performing unauthorized actions. A direct connection offers no place to inspect and sanitize this data in transit.

Managing these risks requires a centralized enforcement point, a role that the protocol itself does not fill. To build a secure and compliant AI infrastructure, you must introduce a layer that governs this traffic. For more on this, see how a zero-trust architecture applies to modern API security.

How an MCP gateway works: An architectural deep dive

The architectural difference before and after implementing an MCP gateway is stark. It transforms a chaotic, unmanaged system into an organized, secure, and observable one.

Before the gateway (point-to-point chaos)

In this model, every agent maintains a direct, independent connection to every tool it needs. This network is difficult to secure, monitor, manage, and scale.

After the gateway (centralized control)

Here, all agents connect to a single, logical endpoint: the gateway. The gateway becomes the sole orchestrator of traffic, intelligently routing requests to the correct upstream services.

The request lifecycle through a gateway follows these steps:

- Request initiation: An AI host needs to use a tool, so sends an MCP request to the gateway’s endpoint.

- Authentication and authorization: The gateway first authenticates the agent’s identity, often using a standard mechanism like an API key, OAuth token, or mTLS certificate. It then checks its policies to authorize the request, ensuring this specific agent is allowed to use the requested tool with the provided inputs.

- Policy enforcement: The gateway applies other configured policies. This could include rate limiting to prevent abuse, transforming the request payload, or validating inputs against a predefined schema.

- Intelligent routing: Based on the tool name, version, or other metadata in the request, the gateway routes the traffic to the correct backend tool server.

- Response handling: The tool server processes the request and sends its response back to the gateway.

- Logging and sanitization: The gateway logs the entire transaction for auditing and observability. It can also inspect the response from the tool, sanitizing it to strip potential prompt injection code before returning it to the AI host.

The five core capabilities of an enterprise MCP gateway

A true enterprise-grade MCP gateway provides a suite of capabilities that go far beyond simple request forwarding. These are the five core functions to look for:

| Capability | Core function |

| Unified access and routing | Decouples agents from tools, providing a single entry point for intelligent traffic management. |

| Centralized security | Enforces consistent authentication and authorization policies across the entire AI ecosystem. |

| Dynamic tool discovery | Acts as a central catalog for agents to find and understand available tools. |

| Comprehensive observability | Generates detailed logs, metrics, and traces for a complete audit trail and easy debugging. |

| Performance and reliability | Improves system stability through caching, load balancing, and circuit-breaking patterns. |

Comparison of MCP gateway approaches

| Feature | Build (custom) | Open source | Commercial API gateway |

| Initial cost | Very high (engineering time) | Low (infrastructure only) | Medium (licensing fees) |

| Total cost of ownership | Very high (maintenance, updates) | Medium (operational overhead) | Lower (predictable fees, less overhead) |

| Time to production | Slow (6-12+ months) | Moderate (weeks to months) | Fast (days to weeks) |

| Security features | Custom-built | Basic (API keys, simple auth) | Advanced (OIDC, mTLS, granular policies) |

| Scalability and high availability | Custom-engineered | Varies; often requires manual setup | Built-in, enterprise-grade clustering |

| Support and maintenance | Entirely in-house | Community-based, best-effort | Professional 24/7 support with SLAs |

Practical use cases and implementation patterns

MCP gateways are used in the real world to solve concrete business problems, primarily by enabling secure and managed access between autonomous agents and enterprise systems. They are the critical bridge that allows AI to move from a sandboxed experiment to a production-grade business tool.

Use case: The enterprise AI assistant

Imagine an internal chatbot or AI assistant designed to help employees with common tasks. This agent needs access to a wide array of internal systems: the HR system to look up vacation balances, the financial database to check on an expense report, and the IT support platform to create a new ticket.

Without a gateway, this would be a security nightmare. With an MCP gateway, the architecture becomes secure and manageable. The gateway:

- Authenticates the employee interacting with the chatbot via the company’s single sign-on (SSO) provider.

- Enforces authorization policies, ensuring the agent can only access data that the specific employee is permitted to see. For example, a manager can query team vacation data, but an individual contributor cannot.

- Routes requests to the correct internal microservices, translating between MCP and whatever protocol the legacy system uses (e.g. REST, SOAP).

- Provides a complete audit trail of all actions taken by the agent on behalf of the user, which is essential for compliance and security reviews.

Use case: Multi-tenant SaaS platform

Consider a Software-as-a-Service (SaaS) company that wants to offer AI agent features to its customers. Each customer’s agent needs access to that customer’s private data and tools, but must be strictly isolated from other customers’ data.

An MCP gateway is the perfect tool for managing this multi-tenant environment. The gateway:

- Uses customer-specific API keys or OAuth tokens to identify which tenant is making a request.

- Dynamically routes the request to the correct, isolated tool server or database instance for that specific customer.

- Enforces usage quotas based on the customer’s subscription tier, preventing one customer from consuming an unfair share of resources.

- Provides per-tenant analytics, allowing the SaaS provider to monitor usage and bill customers accurately.

Frequently asked questions

What is the difference between an MCP gateway and an API gateway?

An MCP gateway is a specialized form of API gateway configured specifically for the Model Context Protocol. While a traditional API gateway is designed to manage REST, GraphQL, and other web protocols, an MCP gateway understands the specific structure of MCP requests for tools and resources. This allows it to apply more intelligent, context-aware policies tailored to AI agent interactions. Some modern, universal API management platforms can be configured to function as powerful MCP gateways.

Is an MCP gateway a replacement for RAG or function calling?

An MCP gateway is not a replacement for RAG or function calling; it is a management and security layer that governs them. RAG and function calling are techniques for providing data and tools to an LLM, while MCP is a protocol to standardize how those tools are requested. The gateway sits in front of the services that implement these techniques to secure, manage, and observe their usage at scale.

Does every project using MCP need a gateway?

Not every project using MCP needs a gateway from day one. For a simple proof-of-concept involving a single agent and one trusted, internal tool, direct communication might be sufficient to get started. However, a gateway becomes essential as soon as you need to manage multiple agents, centrally enforce security policies, connect to production systems with sensitive data, or gain visibility into tool usage and performance.

Can an MCP gateway help defend against prompt injection?

An MCP gateway can significantly help mitigate the risks of prompt injection. It acts as a security checkpoint to validate and sanitize the data returned from a tool before it reaches the AI model’s context window. By enforcing a strict schema on tool responses, the gateway can strip out malicious instructions, unexpected code, or other harmful content that could be used to compromise the agent.

Who are the main providers of MCP gateway solutions?

The MCP gateway market is still emerging, with solutions coming from several categories. Key players include dedicated open-source projects designed specifically for MCP, established API management vendors who are extending their universal gateway platforms to support the protocol, and specialized AI infrastructure companies building comprehensive MLOps and governance platforms.

Conclusion

The Model Context Protocol successfully standardizes how AI agents connect to the tools and data they need to function. However, the protocol is not a management or enforcement solution. Defining an optional authorization framework is not the same as centrally governing who can access what, when, and why.

Ready to build a secure and scalable foundation for your AI applications? Speak to the team about how Tyk’s universal API management platform provides the control and flexibility you need to manage any protocol, from REST to MCP.