The Model Context Protocol standardizes how AI shares data and integrates with systems. Read on for a definition of MCP and to discover architectural considerations, use cases, and how MCP compares to related technologies such as RAG. You’ll also learn how to secure, manage, and observe MCP at scale with an API gateway.

What is Model Context Protocol (MCP)?

Launched by Anthropic in November 2024, the Model Context Protocol is an open standard for integrating AI with systems, tools, and data sources. Its open source framework supports the sharing of data between AI and previously siloed information sources and legacy systems. It enables AI applications to perform tasks and access the information required to do so, removing the constraints associated with large language models (LLMs) being isolated from data.

Some sources have likened MCP to OpenAPI, which is a specification that aims to describe APIs in a way that supports standardization and predictability.

Solving the ‘N x M’ problem for AI

By sharing data in a standardized way between AI and business management tools, workflows, content repositories, development environments, and more, the Model Context Protocol solves the N x M data integration problem.

The N x M problem is where N = the number of data sources and M = the number of data consumers. Having to connect every source directly to every consumer results in N x M individual integrations. This comes with a high maintenance cost and makes it difficult to add new systems, making scaling harder. It also introduces the risk of inconsistency, with different integrations transforming data differently, driving up the need for tribal knowledge in maintaining increasingly brittle systems.
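To make the arithmetic concrete, here is a minimal sketch comparing the two integration counts. The N + M figure for a shared protocol is a simplifying assumption (one adapter per source and one per consumer), not a number taken from the MCP specification, and the function names are illustrative.

```python
def point_to_point(n_sources: int, m_consumers: int) -> int:
    """Every consumer integrates directly with every source: N x M."""
    return n_sources * m_consumers


def via_shared_protocol(n_sources: int, m_consumers: int) -> int:
    """Assumption: each source and each consumer implements the protocol once: N + M."""
    return n_sources + m_consumers


for n, m in [(3, 2), (10, 5), (50, 20)]:
    print(
        f"{n} sources, {m} consumers: "
        f"{point_to_point(n, m)} bespoke integrations vs "
        f"{via_shared_protocol(n, m)} protocol adapters"
    )
```

The gap widens quickly: adding one more source to the 50 x 20 case costs 20 new point-to-point integrations, but only one new adapter under the shared-protocol assumption.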

The vendor-neutral MCP attempts to solve this for AI, standardizing data integrations and reducing the need for bespoke, point-to-point connections. By providing a common interface, it enables models to access and interact with diverse tools and data sources in a way that’s both efficient and scalable.

The USB-C analogy for AI connectivity

Remember how USB-C came along and provided much-needed standardization for how we connect devices? MCP is like that for AI, with chat interfaces, integrated development environments (IDEs), code editors, and other AI applications on one side, and data systems and tools on the other.

How MCP works: A look at the architecture

The protocol defines three components (an MCP host, client, and server) connected by a transport layer that carries messages between them.

The core components: Host, client, and server

The MCP host is an AI environment or application that contains the large language model. This can be a conversational AI or IDE. Examples include Claude Desktop, Cursor, and Windsurf. This is the point where the user interacts with the AI.

The MCP client enables the host and server to communicate, translating their requests and replies, as well as finding available servers. It sits within the MCP host. MCP includes a list of 100+ clients on its site, making it easier for organizations to add MCP support to their applications.

LLMs require the provision of context, data, and capabilities, which is where the MCP server component comes in. It translates responses and requests between the LLM and the external tools and systems it needs to communicate with. Companies building production-ready MCP servers for their platforms have official integrations on GitHub.

The communication flow: The transport layer (JSON-RPC)

Communication between the client and server is facilitated by the transport layer, which uses JSON-RPC 2.0 messages. The standard input/output (stdio) transport method provides speedy, synchronous message transmission, making it ideal for local resources, while server-sent events (SSE) facilitate real-time data streaming that works well for remote resources. Later revisions of the MCP specification define a Streamable HTTP transport for remote servers, which supersedes the original HTTP with SSE transport.
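As a rough illustration of what travels over the transport, the sketch below builds JSON-RPC 2.0 request and response envelopes in plain Python. The `tools/list` and `tools/call` method names come from the MCP specification; the `get_weather` tool, its arguments, and the helper function names are hypothetical, and no MCP SDK is used here.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request envelope."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def jsonrpc_result(request: Dict[str, Any], result: Dict[str, Any]) -> Dict[str, Any]:
    """Build the matching success response; the id echoes the request."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Ask the server which tools it offers, then invoke one of them.
list_request = jsonrpc_request("tools/list")
call_request = jsonrpc_request(
    "tools/call",
    {"name": "get_weather", "arguments": {"city": "London"}},  # hypothetical tool
)
call_response = jsonrpc_result(
    call_request,
    {"content": [{"type": "text", "text": "14C and cloudy"}]},  # made-up payload
)

print(json.dumps(call_request, indent=2))
print(json.dumps(call_response, indent=2))
```

The same envelopes are used whichever transport carries them; only the framing around each message differs, so check the specification for the exact rules of the transport you choose.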

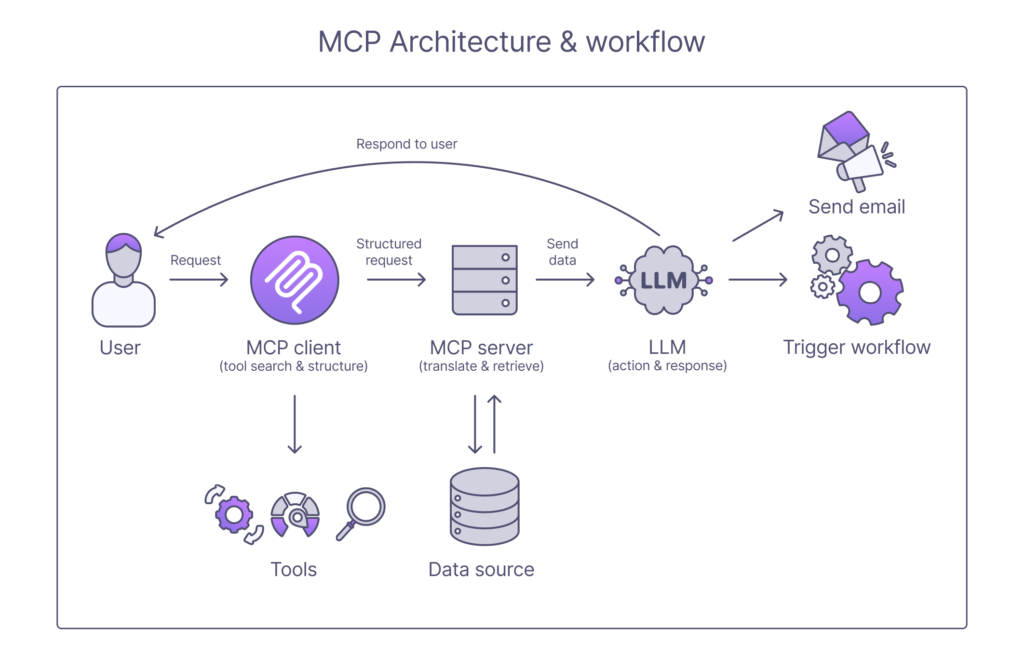

A visual guide to an MCP request

When an MCP host receives a user request, it uses the above architecture to produce a response. First, the MCP client identifies the tools it needs to fulfill the request, then structures a request to use them and sends it to the MCP server. The server translates the request into whatever format the data source requires, retrieves the data, and sends it back to the LLM via the client. The LLM can then take whatever action it needs, such as triggering a workflow, sending an email, or incorporating the data into its response to the user.
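The flow described above can be sketched with two toy classes. This is an in-memory illustration only: `ToyServer`, `ToyClient`, the `check_stock` tool, and the inventory data are all hypothetical, and a real client and server would exchange JSON-RPC messages over a transport rather than call each other directly.

```python
from typing import Any, Callable, Dict, List


class ToyServer:
    """Hypothetical stand-in for an MCP server wrapping one data source."""

    def __init__(self) -> None:
        self._inventory = {"widget": 12, "gadget": 0}  # pretend backend system
        self._tools: Dict[str, Callable[..., Dict[str, Any]]] = {
            "check_stock": self._check_stock,
        }

    def _check_stock(self, item: str) -> Dict[str, Any]:
        # Translate the tool call into a lookup against the backend.
        return {"item": item, "in_stock": self._inventory.get(item, 0)}

    def list_tools(self) -> List[str]:
        return sorted(self._tools)

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**arguments)


class ToyClient:
    """Hypothetical stand-in for the MCP client that lives inside the host."""

    def __init__(self, server: ToyServer) -> None:
        self._server = server

    def fulfil(self, tool: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
        available = self._server.list_tools()  # step 1: discover tools
        if tool not in available:
            raise LookupError(f"{tool} is not offered; available: {available}")
        return self._server.call_tool(tool, arguments)  # steps 2-3: call and return


client = ToyClient(ToyServer())
result = client.fulfil("check_stock", {"item": "widget"})
print(result)  # the host would hand this result back to the LLM
```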

MCP vs. RAG vs. function calling: What’s the difference?

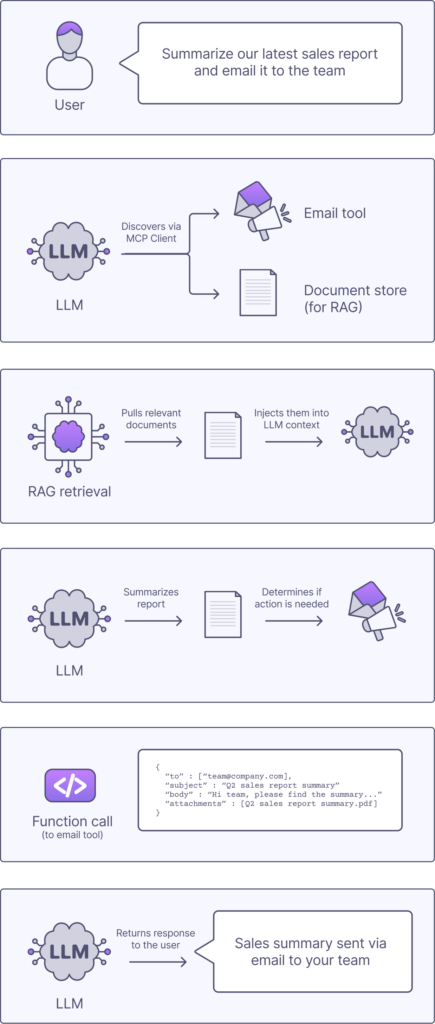

MCP is not the only way to enhance and integrate an LLM with data. You can also use retrieval-augmented generation (RAG) and function calling. Each operates at a different layer of the LLM stack to solve complementary problems:

- MCP focuses on standardization and orchestration

- RAG focuses on knowledge retrieval

- Function calling is about taking action

Key architectural differences

As detailed above, the system-centric MCP approach includes the MCP host, client, and server, loosely coupled via the standardized protocol.

RAG works differently, with a data-centric approach that uses the LLM, an embedding model, a vector database, and a retrieval pipeline. These components are tightly coupled to a specific data store and retrieval pipeline. The user’s query is converted to a vector by the embedding model, that vector is used to retrieve relevant documents from the vector database, the documents are injected into the prompt, and the LLM generates a response.
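A minimal sketch of that retrieval pipeline follows. It swaps in a bag-of-words counter for the embedding model and a Python list for the vector database, purely so the example runs without external services; the documents and function names are invented for illustration.

```python
import math
import re
from collections import Counter
from typing import List

# Stand-in for a vector database: a handful of made-up documents.
DOCS = [
    "Employees accrue 25 days of paid time off per year.",
    "The staging cluster is deployed from the main branch.",
    "Expense reports are due by the fifth of each month.",
]


def embed(text: str) -> Counter:
    """Toy embedding: word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    return sorted(docs, key=lambda d: cosine(query_vec, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: List[str]) -> str:
    """Inject the retrieved documents into the prompt sent to the LLM."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("How many days of paid time off do I get?", DOCS)
print(prompt)
```

In a production pipeline the embedding model and vector store would be real services, which is where the tight coupling mentioned above comes from.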

Function calling works by using function schema definitions and an execution layer with the LLM. The user inputs the query and the LLM selects the function. It sends a structured JSON call to the external system, which executes and returns the result to the LLM. This action-centric architecture is tightly coupled per function/tool, with each integration defined separately.
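The sketch below shows the two halves of that architecture: a function schema the model can see, and an execution layer that validates and runs the structured call. The `send_email` function, its schema, and the hard-coded `model_output` string are hypothetical; in practice the JSON call would be produced by the LLM, and schema formats vary by provider.

```python
import json
from typing import Any, Callable, Dict


def send_email(to: str, subject: str) -> Dict[str, Any]:
    """Hypothetical tool: pretend to queue an email."""
    return {"status": "queued", "to": to, "subject": subject}


# One entry per function: each integration is defined separately.
FUNCTIONS: Dict[str, Dict[str, Any]] = {
    "send_email": {
        "handler": send_email,
        "schema": {
            "name": "send_email",
            "description": "Send an email notification.",
            "parameters": {
                "type": "object",
                "properties": {"to": {"type": "string"}, "subject": {"type": "string"}},
                "required": ["to", "subject"],
            },
        },
    },
}


def execute(call_json: str) -> Dict[str, Any]:
    """Execution layer: parse the model's structured call, validate it, run it."""
    call = json.loads(call_json)
    entry = FUNCTIONS.get(call["name"])
    if entry is None:
        raise ValueError(f"unknown function: {call['name']}")
    arguments = call.get("arguments", {})
    missing = [p for p in entry["schema"]["parameters"]["required"] if p not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    handler: Callable[..., Dict[str, Any]] = entry["handler"]
    return handler(**arguments)


# Stand-in for what the LLM would emit after choosing a function.
model_output = (
    '{"name": "send_email", '
    '"arguments": {"to": "ops@example.com", "subject": "Deploy finished"}}'
)
result = execute(model_output)
print(result)
```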

When to use each approach

Each of these approaches serves a different purpose.

| Approach | Role | Purpose |
| --- | --- | --- |
| MCP | Infrastructure layer | Providing a standard way to access tools and data |
| RAG | Context layer | Fetching relevant knowledge |
| Function calling | Execution layer | Executing specific tools |

How they can work together

As MCP, RAG, and function calling all serve different purposes, you can use them together; they are complementary layers rather than competing choices. You can apply MCP to standardize access to tools and data sources, add RAG to provide relevant context from documents, then use function calling to perform actions in external systems.

Securing and managing MCP servers at scale with an API gateway

Using MCP makes it easier to scale your AI implementation in a way that’s simple (all things being relative) and interoperable. Using an AI-ready API gateway means you can manage and secure your MCP servers efficiently and consistently.

Tyk AI Studio is a leading example of an AI gateway. The open-core gateway enables you to route, govern, and secure all AI traffic across your organization, with every AI request flowing through the gateway and every policy enforced there.

By providing a single control plane for LLMs, agents, MCP tool chains, and RAG workloads, the AI gateway delivers crucial consistency and predictability.

Why MCP servers are the new API endpoints

MCP servers are often described as the “new API endpoints.” That’s because they abstract and standardize what APIs traditionally expose. However, MCP servers don’t replace APIs; they sit on top of them, meaning a robust API foundation is key to AI success. (For more on AI, APIs and AI readiness, download the free strategic blueprint for enterprise.)

MCP servers build on API capabilities to solve many scaling and usability limitations. Key differences are highlighted in the comparison table below.

| Traditional APIs | MCP servers |
| --- | --- |
| Expose functions to developers via hardcoded endpoints | Provide intelligent agents with endpoints for self-describing capabilities (which you configure, rather than hardcoding) |
| Require you to call a specific endpoint | Expose tools and resources in a discoverable, structured way |
| Require you to know the exact schema and logic | Let the model work out which tool to use and how |
| Lead to the N x M problem, with each client having to integrate with each service individually (an API gateway can make this less complex) | Solve the N x M problem, enabling clients to talk to a standard protocol and handling translation to underlying systems |
| Developer-driven (developers decide when and how to call endpoints) | Model-driven (the LLM decides dynamically which tool to use, which data to fetch, and when to act) |
| Static endpoints with no built-in discovery | Tools and data enumerated at runtime, with the model able to adapt to new capabilities without code changes |
| Return raw JSON data for apps to process | Return context: structured results for LLM consumption and reasoning |
| Execution layer | Interface layer |

Applying authentication and authorization (OAuth, API Keys)

When it comes to authentication and authorization, MCP and traditional API infrastructure complement each other. You can use the API gateway to authenticate users (with an API key or OAuth token) and authorize their scopes, roles, and permissions. This ensures robust security and access control for your APIs, including those that feed data to your LLM. The AI can then access those secured capabilities.
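As a rough sketch of the check a gateway performs before a tool call reaches an MCP server, the example below authenticates an API key and then authorizes it against the scope a tool requires. The keys, scopes, tool names, and the `authorize` function are all hypothetical, and this is not how any particular gateway product implements the check; a real deployment would store hashed credentials and typically validate OAuth tokens as well.

```python
from typing import Dict, Set, Tuple

# Hypothetical key store: API key -> scopes granted to that caller.
API_KEYS: Dict[str, Set[str]] = {
    "key-hr-bot": {"hr:read"},
    "key-ops-bot": {"deploy:write", "logs:read"},
}

# Hypothetical policy: tool name -> scope required to call it.
TOOL_SCOPES: Dict[str, str] = {
    "get_pto_balance": "hr:read",
    "deploy_service": "deploy:write",
}


def authorize(api_key: str, tool: str) -> Tuple[int, str]:
    """Return an HTTP-style status and reason for a requested tool call."""
    granted = API_KEYS.get(api_key)
    if granted is None:
        return 401, "unknown API key"  # authentication failed
    required = TOOL_SCOPES.get(tool)
    if required is None or required not in granted:
        return 403, f"key is not permitted to call {tool}"  # authorization failed
    return 200, "ok"


print(authorize("key-hr-bot", "get_pto_balance"))
print(authorize("key-hr-bot", "deploy_service"))
print(authorize("not-a-key", "get_pto_balance"))
```

Tools with no policy entry are denied by default here, which is a deliberately conservative design choice for capabilities an LLM can invoke on its own.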

Gaining observability: Monitoring, logging, and analytics

An API gateway also supports the instrumentation of observability. This is an essential gain for your AI implementation. Observability means you can see what’s happening, where and why, which is essential for fast and effective troubleshooting.

Monitoring, logging, and the ability to analyze telemetry data also enable you to understand usage and develop and build products and services in response to that data.
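To show the kind of telemetry this refers to, here is a minimal sketch that records the tool name, outcome, and duration of each call. The `observed` decorator, the in-memory `CALL_LOG`, and the `lookup_order` tool are hypothetical; a gateway would capture this at the network layer and ship it to a logging or analytics backend rather than a Python list.

```python
import functools
import time
from typing import Any, Callable, Dict, List

CALL_LOG: List[Dict[str, Any]] = []  # stand-in for a real telemetry sink


def observed(tool_name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Wrap a tool so that every call is recorded with status and duration."""

    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                # Runs on success and failure, so failed calls are logged too.
                CALL_LOG.append(
                    {
                        "tool": tool_name,
                        "status": status,
                        "duration_ms": (time.perf_counter() - start) * 1000,
                    }
                )

        return wrapper

    return decorator


@observed("lookup_order")
def lookup_order(order_id: str) -> Dict[str, str]:
    """Hypothetical tool that an LLM might call."""
    return {"order_id": order_id, "state": "shipped"}


lookup_order("A-100")
print(CALL_LOG[-1])
```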

A further benefit is that you can avoid opacity regarding AI decision-making and bias, which is particularly important for meeting compliance obligations.

Protecting your tools with rate limiting and quotas

An AI gateway doesn’t just log and monitor; it also protects. You can use it to apply rate limits and quotas to protect your services from becoming overwhelmed. This is essential because MCP makes it easy for an LLM to call whichever tool or API it likes. The dynamic nature of those calls can create a risk of too many requests and unexpected usage spikes impacting performance. Being able to define rate limits and quotas guards against this. You can also implement them to prevent the overuse of expensive or sensitive APIs.

Protecting your downstream services from overload and uncontrolled consumption in this way means you can limit requests and prevent bursty traffic to support reliable performance and control costs.
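One common way to implement this kind of limit is a token bucket, sketched below. It is offered as an illustration of the general technique, not as a description of how any specific gateway enforces limits; the class name, parameters, and the injected fake clock (used so the example behaves deterministically) are assumptions.

```python
import time
from typing import Callable, List


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_sec`."""

    def __init__(
        self,
        rate_per_sec: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, consuming one token."""
        now = self.clock()
        # Refill based on elapsed time, never exceeding the burst capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# A fake clock makes the example repeatable without sleeping.
fake_now: List[float] = [0.0]
bucket = TokenBucket(rate_per_sec=1, capacity=2, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(3)]  # third call exceeds the burst capacity
fake_now[0] += 1.0  # one second passes, so one token is refilled
after_wait = bucket.allow()

print(burst, after_wait)
```

A quota works on the same principle over a longer window, for example a fixed number of calls per key per day.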

Real-world MCP use cases for the enterprise

At a headline level, MCP in the enterprise supports the secure connection between AI agents and internal data, tools, and SaaS apps. This can drive high return on investment through automation in everything from software development to customer support to data analytics. Let’s look at some real-world enterprise use cases.

Building internal developer platforms (IDPs) with AI

An internal developer platform is a primary resource for many enterprises, providing a single interface where developers can query infrastructure, trigger workflows, and access documentation and runbooks.

Building an IDP with AI means you can expose internal systems (such as Git, CI/CD, cloud APIs, and observability tools) with MCP as standardized capabilities. This means the LLM can discover and interact with them dynamically, instead of the enterprise needing hardcoded, bespoke integrations.

Using MCP in this way means the enterprise’s IDP becomes a unified AI interface over the entire engineering stack, delivering significant benefits in terms of efficiency, collaboration, and reusability.

Imagine, for example, that you needed to deploy the latest version of a service to staging and check for errors. You can issue the request to do so, which will prompt MCP to discover the most appropriate deployment tool and logging and monitoring system. It will execute the deployment via function calling, use RAG to pull logs and runbooks, then summarize the outcome for you.

Creating secure enterprise chatbots

Chatbots can be an excellent tool for accessing internal data (such as HR, CRM, and finance systems), as well as for performing actions like submitting requests and updating records.

For enterprise-grade chatbots, MCP serves as a controlled access layer between the LLM and enterprise systems. With only approved tools and data exposed, it can support secure and compliant access, working alongside an API gateway providing authentication, authorization, rate limiting, and auditing.

A staff member could, for example, ask the chatbot what their remaining paid time off (PTO) allowance is and whether they can book a particular day off. MCP will use RAG-style retrieval to gather the PTO data, then function calling to trigger the action in the HR system. The API gateway will enforce permissions as part of this, ensuring access is authorized and within scoped permissions.

The process avoids the chatbot having direct, messy access to multiple systems. Instead, it applies a governed, standardized interface that allows secure access according to relevant permissions.

Orchestrating complex multi-agent workflows

It’s also possible to run more complex MCP use cases within the enterprise, using the technical backdrop of MCP to orchestrate multi-agent workflows. Doing so turns MCP into a tool that makes orchestration scalable, with agents sharing a common capability layer.

Let’s say an enterprise wants an AI system that can coordinate multiple agents for research, planning, and execution across multiple systems (encompassing data, APIs, and workflows):

- Agent 1, tasked with analysis, can use MCP to query analytics data with RAG.

- Agent 2, responsible for diagnosis, can pull CRM and marketing data.

- Agent 3, focused on execution, can launch a campaign or adjust pricing using function calling.

In all three cases, the agents use MCP rather than custom integrations. This avoids the chaos of each agent needing its own integrations (the N x M problem again) by providing a common capability layer.

MCP turns fragmented, distributed enterprise systems into a unified, AI-accessible platform, enabling developers, users, and agents to interact with the business through a single, intelligent, contextual interface. It does so across multiple services spread across teams, clouds, and regions, providing a level of reach that is essential to modern organizations.

With global firms such as McKinsey increasingly embracing AI agents by the tens of thousands, these real-world, actionable enterprise use cases are becoming increasingly essential to organizations’ ability to compete. At the same time, the shift to agentic AI is highlighting the need for robust security and governance to avoid the exposure not just of data but of capabilities – as McKinsey’s March 2026 Lilli breach highlighted.

AI governance for the enterprise

Being able to connect AI to data sources and business tools using a standard protocol opens a whole new world of possibilities. It also raises the specter of increased and newly emerging security risks, making both API governance and AI governance critical.

With the question of “What is Model Context Protocol (MCP)” put to bed, the open-core Tyk AI Studio can support you to route, govern, and secure your AI traffic. Designed as an AI gateway for the agentic era, it provides full lifecycle control over every AI interaction, including complete audit trails for prompts, responses, and tool calls. AI traffic governance also includes personally identifiable information (PII) redaction and content filtering enforced at the gateway.

Tyk AI Studio also includes intelligent model routing, RAG and data governance, and powerful cost attribution and budget enforcement capabilities, ensuring enterprises can tackle spiraling AI costs head-on. It’s essentially a programmable control plane that evolves, adapts, and scales alongside your business needs.

Speak to the Tyk team to find out more and test your use case.