Distributed traces provide a detailed, end-to-end view of a single API request or transaction as it traverses through various services and components. Traces are crucial for understanding the flow of requests and identifying bottlenecks or latency issues. Here’s how you can make use of traces for API observability:


- End-to-end request tracing: Implement distributed tracing across your microservices architecture to track requests across different services and gather data about each service’s contribution to the overall request latency.

- Transaction Flow: Visualize the transaction flow by connecting traces to show how requests move through different services, including entry points (e.g., API gateway), middleware and backend services.

- Latency Analysis: Analyze trace data to pinpoint which service or component is causing latency issues, allowing for quick identification and remediation of performance bottlenecks.

- Error Correlation: Use traces to correlate errors across different services to understand the root cause of issues and track how errors propagate through the system.

OpenTelemetry Tracing

Since v5.2, Tyk Gateway supports distributed tracing via OpenTelemetry. The gateway exports traces using the OpenTelemetry Protocol (OTLP), making it compatible with any tracing backend that accepts OTLP, such as Jaeger, Datadog, Dynatrace, Elasticsearch, and New Relic. Tyk also supports the legacy OpenTracing approach (now deprecated). Migrate to OpenTelemetry for vendor-neutral, actively maintained tracing.

Configuration

Enable Tracing

Enable OpenTelemetry tracing at the Gateway level, either in the configuration file (`tyk.conf`) or through the equivalent environment variable.
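As a rough illustration of the config-file route, the sketch below builds the `opentelemetry.traces` block as a Python dictionary and prints it as JSON. The nesting is inferred from the dotted field names in the reference table, the helper `enable_tracing` is hypothetical, and `listen_port` is only placeholder content for an existing configuration.

```python
import json


def enable_tracing(conf: dict, endpoint: str = "localhost:4317", exporter: str = "grpc") -> dict:
    """Return a copy of conf with opentelemetry.traces switched on.

    Assumption: dotted names such as opentelemetry.traces.enabled map to
    nested JSON objects in tyk.conf.
    """
    if exporter not in ("grpc", "http"):
        raise ValueError("exporter must be 'grpc' or 'http'")
    updated = json.loads(json.dumps(conf))  # deep copy of JSON-compatible data
    traces = updated.setdefault("opentelemetry", {}).setdefault("traces", {})
    traces.update({"enabled": True, "exporter": exporter, "endpoint": endpoint})
    return updated


if __name__ == "__main__":
    # Placeholder starting config; a real tyk.conf contains many more fields.
    print(json.dumps(enable_tracing({"listen_port": 8080}), indent=2))
```

The function copies its input rather than editing it in place, so the original configuration stays available for comparison.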

Reference

The root-level `opentelemetry.enabled`, `opentelemetry.exporter`, and `opentelemetry.endpoint` fields are deprecated from Tyk 5.13.0. They continue to work for backward compatibility but should be replaced with the `opentelemetry.traces.*` equivalents in new deployments.

| Field | Description | Default |
|---|---|---|
| `opentelemetry.traces.enabled` | Enable distributed tracing | `false` |
| `opentelemetry.traces.exporter` | Export protocol: `grpc` or `http` | `grpc` |
| `opentelemetry.traces.endpoint` | OTLP collector endpoint | `localhost:4317` |
| `opentelemetry.traces.connection_timeout` | Connection timeout in seconds | `1` |
| `opentelemetry.traces.headers` | Additional HTTP headers sent with each OTLP export request | - |
| `opentelemetry.traces.tls` | TLS configuration for the OTLP connection | - |
| `opentelemetry.traces.context_propagation` | Trace context format: `tracecontext`, `b3`, `custom`, or `composite` | `tracecontext` |
| `opentelemetry.traces.custom_trace_header` | Custom propagation header name (used with `custom` or `composite` modes) | - |
| `opentelemetry.traces.span_processor_type` | Span processing mode: `batch` or `simple` | `batch` |
| `opentelemetry.traces.span_batch_config.max_queue_size` | Maximum number of spans buffered before export | `2048` |
| `opentelemetry.traces.span_batch_config.max_export_batch_size` | Maximum number of spans per export batch | `512` |
| `opentelemetry.traces.span_batch_config.batch_timeout` | Seconds to wait before forcing an export | `5` |
| `opentelemetry.traces.sampling.type` | Sampling strategy: `AlwaysOn`, `AlwaysOff`, or `TraceIDRatioBased` | `AlwaysOn` |
| `opentelemetry.traces.sampling.rate` | Fraction of traces to sample when using `TraceIDRatioBased` (0.0–1.0) | `0.5` |
| `opentelemetry.traces.sampling.parent_based` | Inherit the parent span’s sampling decision | `false` |
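The move from the deprecated root-level fields to their `opentelemetry.traces.*` equivalents can be sketched as a small transformation over the parsed `opentelemetry` block. The helper `migrate_otel_block` is hypothetical, and the rule that an explicit `traces.*` value wins over a deprecated root-level one is an assumption made for this example, not documented gateway behavior.

```python
DEPRECATED_ROOT_FIELDS = ("enabled", "exporter", "endpoint")


def migrate_otel_block(otel: dict) -> dict:
    """Move deprecated root-level fields under 'traces' and return a new dict."""
    # Keep every root-level key except the deprecated ones and 'traces' itself.
    migrated = {
        key: value
        for key, value in otel.items()
        if key not in DEPRECATED_ROOT_FIELDS and key != "traces"
    }
    traces = dict(otel.get("traces", {}))
    for field in DEPRECATED_ROOT_FIELDS:
        if field in otel:
            # Assumption: a value already set under traces.* takes precedence.
            traces.setdefault(field, otel[field])
    migrated["traces"] = traces
    return migrated


if __name__ == "__main__":
    # 'collector:4318' is a placeholder endpoint for illustration.
    legacy = {"enabled": True, "exporter": "http", "endpoint": "collector:4318"}
    print(migrate_otel_block(legacy))
```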

Detailed Tracing

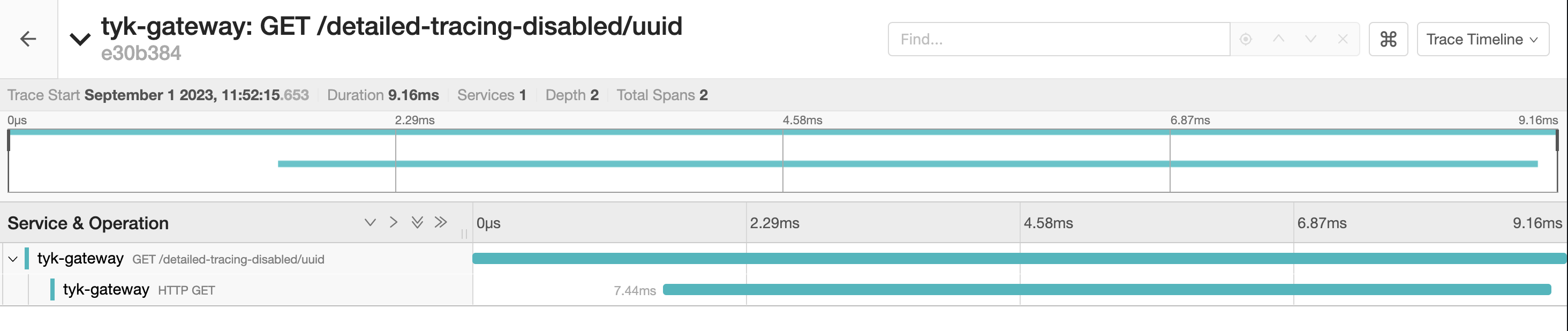

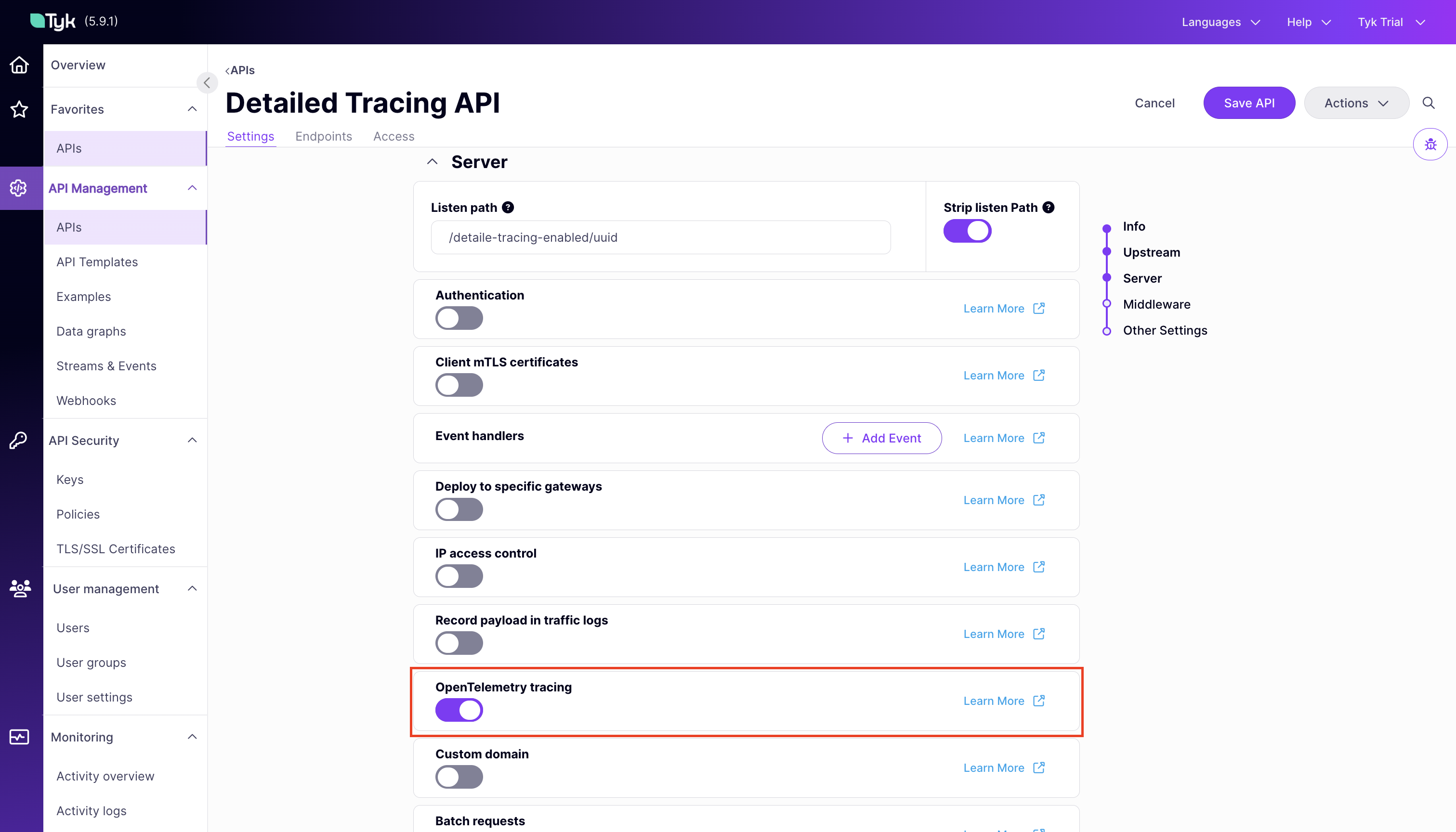

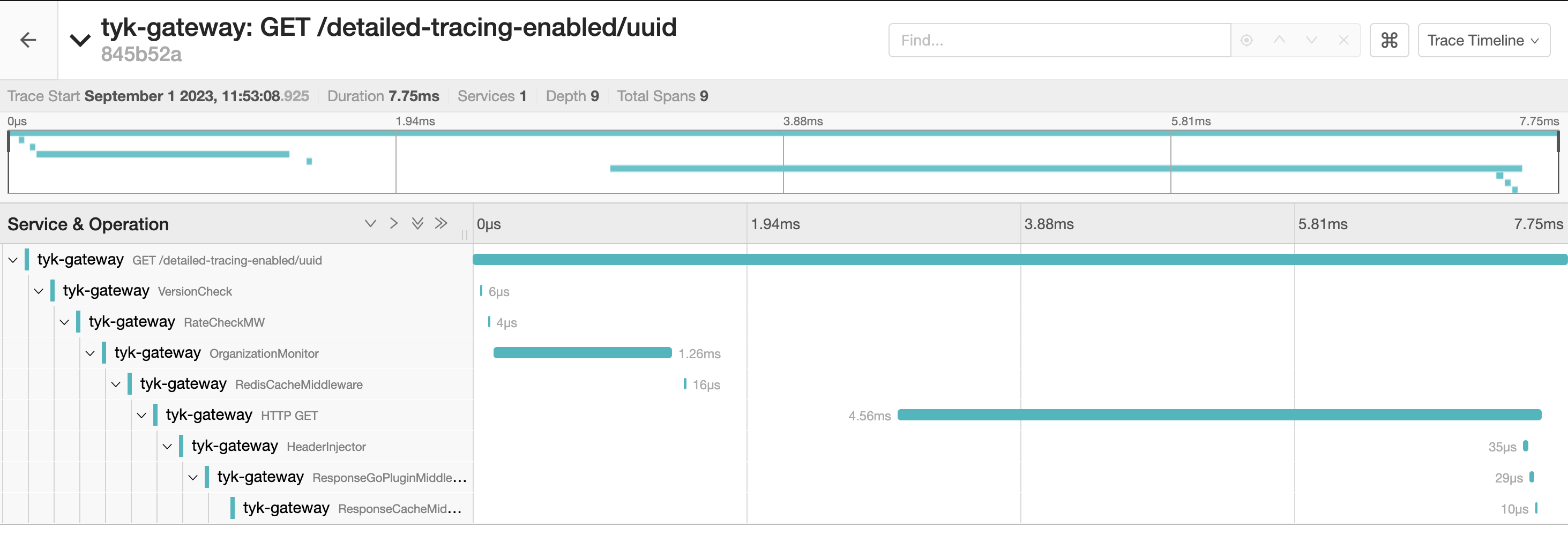

Enable detailed tracing per API to generate a span for each middleware in the request pipeline. These spans offer detailed insights, including the time taken for each middleware execution and the sequence of invocations. Set the server.detailedTracing flag in the Tyk OAS API definition, or toggle OpenTelemetry Tracing in the Tyk OAS API Designer.
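For readers who manage API definitions as files, the flag can be set with a few lines of Python. This is a sketch under two assumptions that the text above does not confirm: that the Tyk vendor extension lives under the `x-tyk-api-gateway` key, and that the flag is expressed as `server.detailedTracing.enabled`. Check both against your own API definition before relying on it. The helper name is hypothetical.

```python
def set_detailed_tracing(api_def: dict, enabled: bool = True) -> dict:
    """Set server.detailedTracing on a parsed Tyk OAS API definition, in place.

    Assumptions: the vendor extension key is 'x-tyk-api-gateway' and the
    flag is stored as {'enabled': <bool>}.
    """
    server = api_def.setdefault("x-tyk-api-gateway", {}).setdefault("server", {})
    server.setdefault("detailedTracing", {})["enabled"] = enabled
    return api_def


if __name__ == "__main__":
    definition = {"info": {"title": "demo-api"}}  # placeholder definition
    print(set_detailed_tracing(definition))
```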

Span Processor Configuration

A span processor controls how completed spans are batched and sent to the tracing backend. It is configured in the Tyk Gateway configuration file under `opentelemetry.traces`.

When using span_processor_type: batch (the default), you can tune the batch processor to avoid span loss under high traffic. Use the span_batch_config block to configure the following fields:

| Field | Default | Description |
|---|---|---|
| `max_queue_size` | `2048` | Maximum number of spans buffered before export |
| `max_export_batch_size` | `512` | Maximum number of spans per export batch |
| `batch_timeout` | `5` | Seconds to wait before forcing an export |

Increase `max_queue_size` and `max_export_batch_size` in high-throughput environments where spans are being dropped before they can be exported.
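To see why a small queue loses spans under load, the following toy model mimics the general behavior of a batch span processor: finished spans wait in a bounded queue, and spans that arrive while the queue is full are dropped. It is a simplified simulation, not the gateway's or the OpenTelemetry SDK's implementation, and the class `BatchQueue` is hypothetical.

```python
from collections import deque


class BatchQueue:
    """Toy model of a bounded span queue drained in fixed-size batches."""

    def __init__(self, max_queue_size: int = 2048, max_export_batch_size: int = 512):
        if max_export_batch_size > max_queue_size:
            raise ValueError("max_export_batch_size must not exceed max_queue_size")
        self.max_queue_size = max_queue_size
        self.max_export_batch_size = max_export_batch_size
        self.queue = deque()
        self.dropped = 0

    def on_end(self, span) -> bool:
        """Queue a finished span; return False if it was dropped."""
        if len(self.queue) >= self.max_queue_size:
            self.dropped += 1
            return False
        self.queue.append(span)
        return True

    def export_batch(self) -> list:
        """Remove and return up to max_export_batch_size spans, oldest first."""
        count = min(self.max_export_batch_size, len(self.queue))
        return [self.queue.popleft() for _ in range(count)]


if __name__ == "__main__":
    q = BatchQueue(max_queue_size=4, max_export_batch_size=2)
    for span_id in range(6):  # a burst of 6 spans with no export in between
        q.on_end(span_id)
    print("dropped:", q.dropped, "first batch:", q.export_batch())
```

In this model, a burst larger than `max_queue_size` between two exports always loses spans, which is the situation that raising the queue size is meant to avoid.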

Understanding The Traces

Tyk Gateway attaches span attributes and resource attributes to the spans it generates. These attributes add context to each request and response, which makes it easier to analyze your API traffic.

Span Attributes

A span is a named, timed operation that represents one part of the workflow. Multiple spans are pieced together to create a trace. While each span includes a duration indicating how long the operation took, span attributes are key-value pairs that provide additional contextual metadata for that span. Tyk automatically sets the following span attributes:

- `tyk.api.name`: API name.

- `tyk.api.orgid`: Organization ID.

- `tyk.api.id`: API ID.

- `tyk.api.path`: API listen path.

- `tyk.api.tags`: If tagging is enabled in the API definition, the tags are added here.

- `tyk.api.apikey.alias`: The identity alias of the authenticated client. Populated for APIs using JWT authentication or multi-auth (compliant mode).

Resource Attributes

Resource attributes provide contextual information about the entity that produced the telemetry data. Tyk exposes the following resource attributes:

Service Attributes

The service attributes supported by Tyk are:

| Attribute | Type | Description |
|---|---|---|
| `service.name` | String | Service name for Tyk API Gateway: `tyk-gateway` |
| `service.instance.id` and `tyk.gw.id` | String | The Node ID assigned to the gateway. Example: `solo-6b71c2de-5a3c-4ad3-4b54-d34d78c1f7a3` |
| `service.version` | String | Represents the service version. Example: `v5.2.0` |
| `tyk.gw.dataplane` | Bool | Whether the Tyk Gateway is hybrid (`slave_options.use_rpc=true`) |
| `tyk.gw.group.id` | String | Represents the `slave_options.group_id` of the gateway. Populated only if the gateway is hybrid. |
| `tyk.gw.tags` | []String | Represents the gateway `segment_tags`. Populated only if the gateway is segmented. |

Common HTTP Span Attributes

Tyk follows the OpenTelemetry semantic conventions for HTTP spans, which document the common attributes in detail. Some of these common attributes include:

- `http.method`: HTTP request method.

- `http.scheme`: URL scheme.

- `http.status_code`: HTTP response status code.

- `http.url`: Full HTTP request URL.

Advanced Configuration

Context Propagation

This setting selects the context propagator used for trace data, which keeps trace context compatible across the services in your architecture. Set it in the configuration file (`tyk.conf`) or through the equivalent environment variable.

`opentelemetry.traces.context_propagation` controls which trace context format the gateway reads from incoming requests and writes to upstream requests.

| Value | Behavior |
|---|---|
| `tracecontext` (default) | W3C Trace Context format |
| `b3` | B3 multi-header format |
| `custom` | Reads and writes only the custom header set in `custom_trace_header`. No standard headers are written to upstream |
| `composite` | Reads from the custom header (priority) or standard headers (fallback). Writes both the custom header and `traceparent` to upstream |
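The read and write behavior of each mode can be summarized as a lookup from mode to header names. The sketch below encodes the table above, plus the documented case where `tracecontext` is combined with a custom header. It is a simplification: it reduces "standard headers" to `traceparent`, lists B3 header names from the B3 multi-header format rather than from observed gateway output, and the function `propagation_headers` is hypothetical.

```python
from typing import List, Optional, Tuple

# Header names defined by the B3 multi-header format.
B3_HEADERS = ["X-B3-TraceId", "X-B3-SpanId", "X-B3-ParentSpanId", "X-B3-Sampled", "X-B3-Flags"]


def propagation_headers(mode: str, custom_header: Optional[str] = None) -> Tuple[List[str], List[str]]:
    """Return (headers read in priority order, headers written upstream)."""
    if mode == "tracecontext":
        if custom_header:
            # Custom header is read first, but only traceparent is written.
            return [custom_header, "traceparent"], ["traceparent"]
        return ["traceparent"], ["traceparent"]
    if mode == "b3":
        return list(B3_HEADERS), list(B3_HEADERS)
    if mode in ("custom", "composite"):
        if not custom_header:
            raise ValueError(f"{mode} mode requires a custom trace header")
        if mode == "custom":
            return [custom_header], [custom_header]
        return [custom_header, "traceparent"], [custom_header, "traceparent"]
    raise ValueError(f"unknown context propagation mode: {mode}")


if __name__ == "__main__":
    for mode in ("tracecontext", "custom", "composite"):
        print(mode, propagation_headers(mode, "X-Correlation-ID"))
```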

`custom` and `composite` are available from Tyk Gateway v5.12.0 and require `opentelemetry.custom_trace_header` to be set.

Custom Trace Header

If your upstream systems use a proprietary correlation header (for example, `X-Correlation-ID`), set `custom_trace_header` to that header name. The behavior depends on `context_propagation`:

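- Tracecontext mode (`tracecontext` + `custom_trace_header`): reads from the custom header (with fallback to `traceparent`), writes only the standard `traceparent` header to upstream.

- Custom mode (`custom` + `custom_trace_header`): reads and writes only the custom header. No standard headers are written to upstream.

- Composite mode (`composite` + `custom_trace_header`): reads from the custom header (priority) or standard headers (fallback), writes both the custom header and `traceparent` to upstream.

Sampling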

Tyk supports configuring the following sampling strategies using `opentelemetry.traces.sampling` in `tyk.conf`.

Sampling Type

This setting dictates the sampling policy that OpenTelemetry uses to decide if a trace should be sampled for analysis. The decision is made at the start of a trace and applies throughout its lifetime. By default, the setting is `AlwaysOn`.

Set the opentelemetry.traces.sampling.type field in the Tyk Gateway configuration file or use the equivalent environment variable. Allowed values for this setting are:

| Value | Behavior |
|---|---|
| `AlwaysOn` (default) | All traces are sampled |
| `AlwaysOff` | No traces are sampled |
| `TraceIDRatioBased` | Samples a fraction of traces based on the configured sampling rate |

Sampling Rate

The `opentelemetry.traces.sampling.rate` field controls what fraction of total traces is sampled when `TraceIDRatioBased` sampling is configured. It accepts a value between 0.0 and 1.0. For example, a rate of 0.5 means that approximately 50% of traces are sampled. The default value is 0.5.

- Configuration File: Update the `opentelemetry.traces.sampling.rate` field in the configuration file.

ParentBased Sampling

Parent based sampling ensures sampling consistency between parent and child spans. Child spans inherit the parent's sampling decision: if a parent span is sampled, all its child spans are sampled too, and if the parent is not sampled, its children are not sampled either. This is particularly effective when used with `TraceIDRatioBased` sampling, as it helps to keep the entire transaction story together. Using ParentBased with `AlwaysOn` or `AlwaysOff` may not be as useful, since in these cases, either all or no spans are sampled.

Enable parent based sampling by setting opentelemetry.traces.sampling.parent_based or the equivalent environment variable. The default value is false.
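The interaction between sampling type, rate, and parent based sampling can be sketched as a single decision function. This is a simplified model and not the gateway's code: the OpenTelemetry SDKs use a comparable trace-ID threshold comparison for ratio sampling, but the exact arithmetic varies between implementations. The function names are hypothetical.

```python
TRACE_ID_MASK = (1 << 64) - 1  # compare only the low 64 bits of the 128-bit trace ID


def ratio_sampled(trace_id: int, rate: float) -> bool:
    """Deterministic ratio decision: the same trace ID always gets the same answer."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    return (trace_id & TRACE_ID_MASK) < int(rate * (1 << 64))


def should_sample(trace_id, sampling_type="AlwaysOn", rate=0.5,
                  parent_based=False, parent_sampled=None) -> bool:
    """Decide whether a span is sampled.

    parent_sampled is None for a root span (no incoming trace context).
    """
    if parent_based and parent_sampled is not None:
        return parent_sampled  # inherit the parent's decision
    if sampling_type == "AlwaysOn":
        return True
    if sampling_type == "AlwaysOff":
        return False
    if sampling_type == "TraceIDRatioBased":
        return ratio_sampled(trace_id, rate)
    raise ValueError(f"unknown sampling type: {sampling_type}")


if __name__ == "__main__":
    import random

    rng = random.Random(42)
    ids = [rng.getrandbits(128) for _ in range(10_000)]
    kept = sum(should_sample(i, "TraceIDRatioBased", 0.5) for i in ids)
    print(f"kept {kept} of {len(ids)} traces at rate 0.5")
```

Because the ratio decision depends only on the trace ID, every service applying the same rate reaches the same verdict for a given trace. Parent based sampling removes the remaining mismatch when services use different rates.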

Tracing Backends

Step-by-step setup guides are available for connecting Tyk Gateway traces to a specific backend. All configuration options are documented in the Tyk Gateway configuration reference.

OpenTracing (deprecated)

Enabling OpenTracing

Configure OpenTracing at the Gateway level in `tyk.conf`:

| Field | Environment variable | Description |
|---|---|---|
| `tracing.enabled` | `TYK_GW_TRACER_ENABLED` | Set to `true` to enable tracing |
| `tracing.name` | `TYK_GW_TRACER_NAME` | Name of the supported tracer |
| `tracing.options` | `TYK_GW_TRACER_OPTIONS` | Key-value pairs for configuring the tracer. See the tracer’s documentation for details |

Legacy Vendor Configurations

- Jaeger: Tyk’s OpenTelemetry tracing works with Jaeger. Follow the Jaeger guide instead.

- New Relic

- Zipkin