Introduction

Tyk Gateway produces logs that capture internal events, errors, warnings, and details of request processing. In Kubernetes environments, these logs are written to stdout/stderr and captured by the container runtime, but they are ephemeral by default. Without a log collection strategy, critical operational data is lost when pods are restarted or evicted.

The OpenTelemetry Collector provides a vendor-neutral way to collect, process, and export logs from your Tyk Gateway pods to any supported backend. By using the Filelog Receiver, the Collector can tail container log files on each Kubernetes node and forward them to a log analytics backend such as Elasticsearch.

This guide walks you through deploying the OpenTelemetry Collector alongside Tyk on Kubernetes, configuring it to collect Gateway logs, and shipping those logs to Elasticsearch.

Architecture Overview

The following diagram illustrates how logs flow from Tyk Gateway containers through the OpenTelemetry Collector to Elasticsearch:

Prerequisites

Before getting started, ensure you have the following:

- Kubernetes with kubectl configured

- Helm 3+

- Elasticsearch Cluster

- Enterprise Edition License

- Basic familiarity with OpenTelemetry Collector concepts

Instructions Overview

1. Install Tyk Stack on Kubernetes

To install Tyk on Kubernetes, follow the Tyk Helm Charts installation guide.

When installing the Tyk stack, add the flag --set tyk-gateway.gateway.log.format=json to configure the Gateway to output logs in JSON format.

2. Deploy OpenTelemetry Collector

Add the OpenTelemetry Helm repository and install the Collector as a DaemonSet, supplying its configuration in an otel-collector-values.yaml file. This file configures the Collector in DaemonSet mode with the Filelog Receiver, and exports logs to Elasticsearch.

In the configuration below, replace the Elasticsearch host and password with your actual values.

Pipeline Overview

This logs pipeline reads container logs directly from the node filesystem using the filelog receiver, targeting Gateway pod log files.

The json_parser operator parses JSON-formatted log entries, extracting structured fields from the log body into attributes for richer filtering and analysis.

Each log entry is then enriched by the k8sattributes processor, which adds pod, namespace, node, container metadata, and all Kubernetes labels for better filtering and correlation.

The batch processor groups logs efficiently to reduce export overhead. Finally, the processed logs are sent to Elasticsearch, where they are indexed under k8s-logs for centralized search and analysis.
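The pipeline described above can be sketched as a values file for the opentelemetry-collector Helm chart. This is a minimal illustration and not necessarily the exact configuration this guide uses. It assumes the contrib Collector image, a namespace called tyk, Gateway pod names starting with gateway-, and a containerd-style log layout under /var/log/pods. The volume name and the use of the chart's kubernetesAttributes preset are also assumptions. Check option names against the chart and Collector versions you deploy.

```shell
# Write an illustrative otel-collector-values.yaml (a sketch under the assumptions above).
cat > otel-collector-values.yaml <<'EOF'
mode: daemonset

image:
  # Assumption: the contrib distribution, which bundles the filelog receiver,
  # the k8sattributes processor and the elasticsearch exporter.
  repository: otel/opentelemetry-collector-contrib

presets:
  kubernetesAttributes:
    enabled: true              # chart adds the k8sattributes processor and its RBAC
    extractAllPodLabels: true  # copy every pod label onto each log record

# Expose the node's pod log directory to the Collector (volume name is hypothetical).
extraVolumes:
  - name: varlogpods
    hostPath:
      path: /var/log/pods
extraVolumeMounts:
  - name: varlogpods
    mountPath: /var/log/pods
    readOnly: true

config:
  receivers:
    filelog:
      include:
        # Hypothetical glob: <namespace>_<pod-name>_<uid>/<container>/*.log
        - /var/log/pods/tyk_gateway-*/*/*.log
      include_file_path: true
      operators:
        # Strip the container runtime's line prefix.
        - type: container
        # Parse JSON bodies into attributes; skip lines that are not JSON objects.
        - type: json_parser
          if: 'body matches "^[{]"'
          parse_from: body
          parse_to: attributes
  processors:
    batch: {}
  exporters:
    elasticsearch:
      endpoints: ["<replace_host>:9200"]
      user: elastic
      password: <replace_password>
      logs_index: k8s-logs
  service:
    pipelines:
      logs:
        receivers: [filelog]
        processors: [k8sattributes, batch]
        exporters: [elasticsearch]
EOF

# Assumed install commands (the release name otel-collector is a placeholder):
# helm repo add open-telemetry https://open-telemetry.github.io/opentelemetry-helm-charts
# helm repo update
# helm install otel-collector open-telemetry/opentelemetry-collector -f otel-collector-values.yaml
```

The install commands are left commented out so that the file can be reviewed, and the Elasticsearch placeholders replaced, before anything is deployed.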

The above configuration sends logs to the k8s-logs index in Elasticsearch. Before installing the collector, ensure the k8s-logs index is created in your Elasticsearch cluster.

curl -u elastic:<replace_password> -X PUT "<replace_host>:9200/k8s-logs" -H 'Content-Type: application/json' -d '{"settings": {"index": {}}}'

3. Verify Logs in Elasticsearch

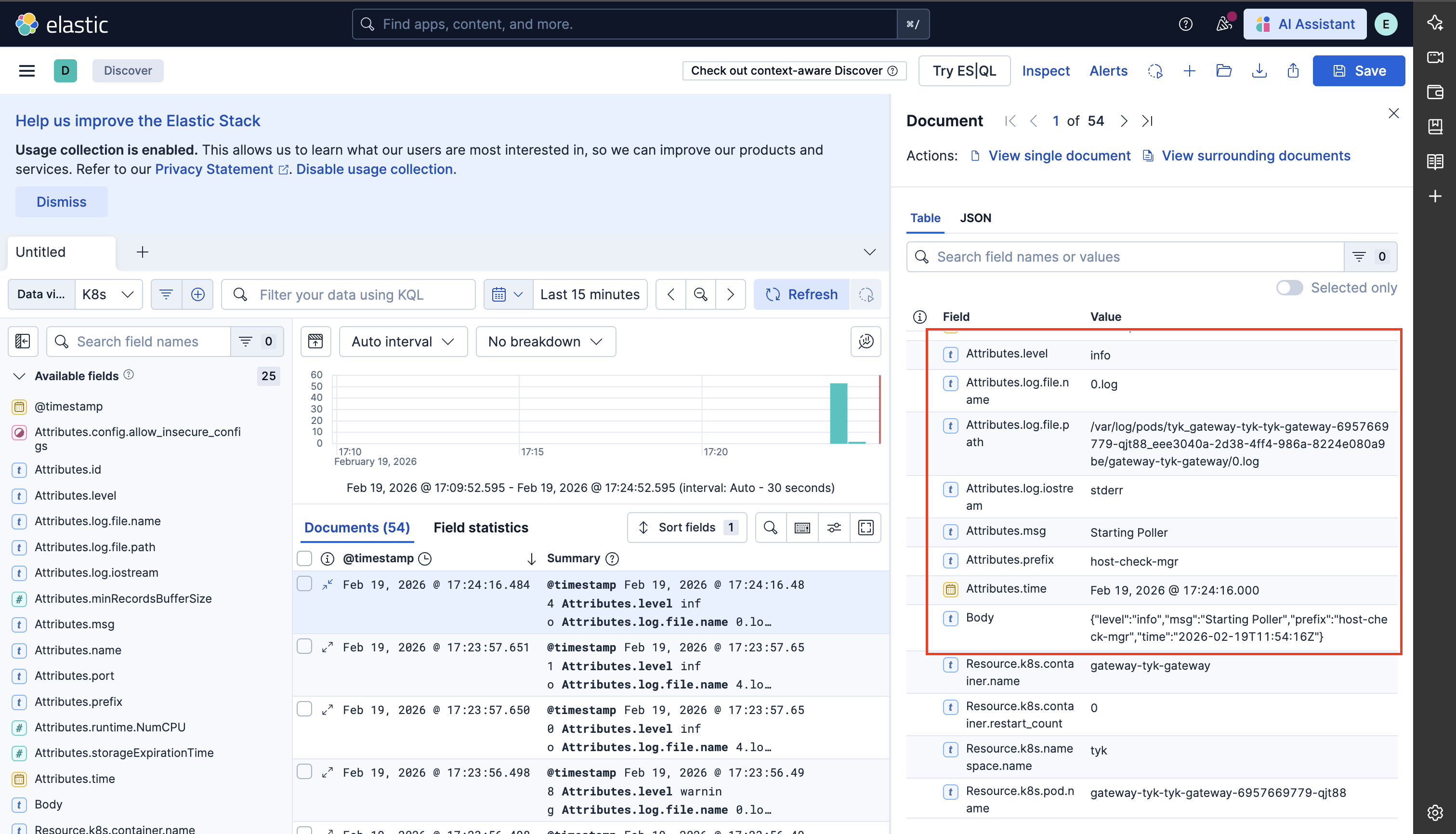

To view the logs, create a data view in Kibana for the k8s-logs index.

Make some test requests to your Tyk Gateway to generate logs, then check Kibana for incoming log entries.
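If Kibana is not at hand, the index can also be checked from the command line. The helpers below are a small sketch with hypothetical function names. They reuse the host and password placeholders from the index-creation command, and assume the indexed documents carry an @timestamp field.

```shell
# Placeholders mirror the index-creation command; export real values before use.
ES_HOST="${ES_HOST:-<replace_host>}"
ES_PASSWORD="${ES_PASSWORD:-<replace_password>}"

# Return the number of documents currently stored in the k8s-logs index.
count_gateway_logs() {
  curl -s -u "elastic:${ES_PASSWORD}" "${ES_HOST}:9200/k8s-logs/_count"
}

# Return the most recent document, to inspect the parsed fields and Kubernetes metadata.
latest_gateway_log() {
  curl -s -u "elastic:${ES_PASSWORD}" \
    "${ES_HOST}:9200/k8s-logs/_search?size=1&sort=@timestamp:desc&pretty"
}
```

Calling count_gateway_logs before and after sending test requests should show the count increasing if the pipeline is working.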